
Speech Assessment: Methods and Applications for Spoken Language Learning

(Chinese title: 語音評分的方法、應用與分享, "Speech Assessment: Methods, Applications, and Sharing")

J.-S. Roger Jang ( 張智星 )

http://www.cs.nthu.edu.tw/~jang

Multimedia Information Retrieval Lab

CS Dept, Tsing Hua Univ, Taiwan

Outline

• Introduction to speech assessment
• Methods
• Using learning to rank for speech assessment
• Demos
• Conclusions

Intro. to Speech Assessment

Goal
• Evaluate a person’s utterance based on some acoustic features, for language learning

Also known as
• Pronunciation scoring
• CAPT (computer-assisted pronunciation training)

Four Aspects of Language Learning

| Media \ Skills | Receptive skill (easier for CALL) | Productive skill (harder for CALL) |
|---|---|---|
| Speech | Listening (聽) | Speaking (說): SA! |
| Text | Reading (讀) | Writing (寫) |

Speech Assessment

Characteristics of ideal SA
• Assessment levels: as detailed as possible (syllables, words, sentences, paragraphs)
• Assessment criteria: as many as possible (timbre, tone, energy, rhythm, co-articulation, …)
• Feedback: as specific as possible (high-level correction and suggestions)

Basic Assessment Criteria

• Timbre (咬字 / 音色): based on acoustic models
• Tone (音調 / 音高): based on tone recognition (for tonal languages), or on pitch similarity with the target utterance
• Rhythm (韻律 / 音長): based on duration comparison with the target utterance
• Energy (強度 / 音量): based on energy comparison with the target utterance

Additional Assessment Criteria

English
• Stress (重音): levels (word or sentence)
• Intonation (整句音調): declarative sentence, interrogative sentence
• Co-articulation (連音): "A red apple." "Did you call me?" "Won’t you go?" "Raise your hand."

Mandarin
• Tone (聲調)
• Retroflex (捲舌音)
• Co-articulation (連音)
• Erhua, i.e. r-colored finals (兒化音)

Others
• Pause

Types of SA

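Types of SA (ordered by difficulty)
• Type 1: with target text, with target utterance
• Type 2: with target text, without target utterance
• Type 3: without target text, with target utterance
• Type 4: without target text, without target utterance

We are focusing on types 1 and 2.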

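Type 1: with target text, with target utterance

Method
• Using a speech recognition engine, perform forced alignment (FA) between the speech and the text, then score each phone by its similarity.

Scoring
• Timbre: compared against the acoustic models of the speech recognition engine
• Tone, rhythm, energy: compared against the target utterance

Characteristics
• Because FA is highly accurate, consistent scoring results are relatively easy to obtain.

Examples
• myET (艾爾實驗室): www.myet.com
• Saybot (說寶堂): www.saybot.com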

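Type 2: with target text, without target utterance

Method
• Using a speech recognition engine, perform forced alignment (FA) between the speech and the text, then score each phone by its similarity.

Scoring
• Timbre: compared against the acoustic models of the speech recognition engine
• Tone: for Mandarin, the canonical tones can be derived from the text, and tone recognition and scoring are then performed on the speech. No similar method exists for English.
• Rhythm, energy: cannot be compared

Characteristics
• Because FA is highly accurate, consistent scoring results are relatively easy to obtain.
• Teaching materials are easier to prepare.
• Rhythm and volume cannot be scored.

Example
• "speak & score" from 階梯英文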

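Type 3: without target text, with target utterance

Method
• Using a speech recognition engine, perform free syllable decoding (FSD) on the speech, then score by the similarity of the resulting syllable strings.

Scoring
• Timbre: compared against the syllable string of the target utterance
• Tone, rhythm, energy: compared using the syllables produced by FSD

Characteristics
• Because the recognition rate of FSD is only 60% to 70%, consistent scoring results are harder to obtain.
• DTW can be used directly for the comparison instead, but scoring consistency is lower because timbre differs between speakers.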

Our Approach

Basic approach to timbre assessment
• Lexicon net construction (usually a sausage net)
• Forced alignment to identify phone boundaries
• Phone scoring based on several criteria, such as ranking, histograms, posterior probability, etc.
• Weighted average to get syllable/sentence scores

Lexicon Net Construction

Lexicon net for “what are you allergic to?”
• Sausage net with all possible (and correct) multiple pronunciations
• Optional sil between words

Lexicon Net with Confusing Phones

Common errors for Japanese learners of Chinese
• ㄖ → ㄌ (r → l), e.g. 天氣熱 → 天氣樂
• ㄑ → ㄐ (q → j), e.g. 打哈欠 → 打哈見
• ㄘ → ㄗ (c → z), e.g. 一次旅行 → 一字旅行
• ㄢ → ㄤ (an → ang), e.g. 晚安 → 晚ㄤ

Rule-based approach to creating confusing syllables (phonological rules!)
• Rule 1: re → le
• Rule 2: qi → ji
• Rule 3: ci → zi
• Rule 4: an → ang

Example: 欠 (qian) → 見 (jian), 嗆 (qiang), 降 (jiang)

Example of Japanese Learners Speaking Chinese

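• 去年夏天熱死了 (Last summer was scorching hot): Example 1, Example 2
• 晚安 (Good night): Example 1, Example 2
• 坐下來、慢慢吃 (Sit down and eat slowly): Example 1
• 他不住的打哈欠 (He keeps yawning): Example 1
• 一次旅行 (One trip): Example 1
• 起風 (The wind is picking up): Example 1
• 休息 (Rest): Example 1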

Lexicon Net with Confusing Phones

Lexicon net for 「天氣熱、打哈欠」
• Canonical form: tian qi re da ha qian
• 16 variant paths in the net, built from the alternatives 氣/記 (qi/ji), 熱/樂 (re/le), and 欠/見/嗆/降 (qian/jian/qiang/jiang)
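The path count on this slide can be checked with a minimal Python sketch. It assumes the net is a plain sausage net with one slot per syllable, and that only qi, re, and qian carry confusable alternatives, as the slide's node labels suggest; the variable names are illustrative.

```python
from itertools import product

# One slot per syllable: canonical form first, then confusable variants.
# Assumption: only qi, re and qian have alternatives in this net.
net = [
    ["tian"],
    ["qi", "ji"],
    ["re", "le"],
    ["da"],
    ["ha"],
    ["qian", "jian", "qiang", "jiang"],
]

# Every path through the sausage net picks one alternative per slot.
paths = [" ".join(p) for p in product(*net)]

print(len(paths))  # 16
print(paths[0])    # tian qi re da ha qian
```

The count is simply the product of the slot sizes (1 × 2 × 2 × 1 × 1 × 4), which is why each added confusable syllable multiplies the size of the net.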

Automatic Confusing Syllable Id.

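1. Run forced alignment on the corpus of Japanese learners of Chinese to obtain an initial segmentation.
2. Compare each segment against the 411 Mandarin syllables to find its confusing syllables.
3. Add the confusing syllables to the recognition net, then rerun forced alignment and segmentation.
4. Has the segmentation stopped changing? If no, repeat; if yes, output the confusing syllables and the recognition net.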

Error Pattern Identification (EPI)

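Common insertions/deletions from users, with 「朝辭白帝彩雲間」 as the target sentence:

• Ending at an arbitrary position, e.g. 「朝辭白帝」
• Starting at an arbitrary position, e.g. 「彩雲間」
• Starting and ending at arbitrary positions, e.g. 「白帝彩雲」
• Starting and ending at arbitrary positions with skipped characters, e.g. 「白彩雲」
• Repeated character, e.g. 「朝…朝辭白帝彩雲間」
• Repeated word, e.g. 「朝辭…朝辭白帝彩雲間」
• Repeated character with a changed pronunciation, e.g. 「朝 (cao)…朝 (zhao) 辭白帝彩雲間」
• Two characters swapped, e.g. 「朝辭彩帝白雲間」
• Wrong characters, e.g. 「朝辭白帝黑山間」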

Lexicon Net for EPI (I)

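Detects utterances that start at the beginning and end at an arbitrary position

Lexicon Net for EPI (II)

Detects utterances that start at an arbitrary position and end at the end

Lexicon Net for EPI (III)

Detects utterances that start and end at arbitrary positions (without skipping characters)

Lexicon Net for EPI (IV)

Detects utterances that start and end at arbitrary positions, with skipped characters allowed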
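What each of the four nets accepts can be illustrated with a small Python sketch. It works on character strings that are assumed to be already recognized, so it only mirrors the accepted patterns (prefix, suffix, contiguous run, subsequence) and is not lexicon-net decoding; the function names are hypothetical.

```python
def is_contiguous(hyp, ref):
    """True if hyp occurs in ref as one unbroken run."""
    n = len(hyp)
    return any(ref[i:i + n] == hyp for i in range(len(ref) - n + 1))


def is_subsequence(hyp, ref):
    """True if hyp can be obtained from ref by deleting characters."""
    it = iter(ref)
    return all(ch in it for ch in hyp)


def simplest_net(hyp, ref):
    """Return the most restrictive EPI net (I to IV) that accepts hyp."""
    if not hyp:
        return "none"
    if hyp == ref:
        return "full sentence"
    if ref[:len(hyp)] == hyp:
        return "I"      # starts at the beginning, ends anywhere
    if ref[-len(hyp):] == hyp:
        return "II"     # starts anywhere, ends at the end
    if is_contiguous(hyp, ref):
        return "III"    # starts and ends anywhere, no skipping
    if is_subsequence(hyp, ref):
        return "IV"     # starts and ends anywhere, skipping allowed
    return "none"


ref = "朝辭白帝彩雲間"
for hyp in ["朝辭白帝", "彩雲間", "白帝彩雲", "白彩雲", "朝辭彩帝白雲間"]:
    print(hyp, simplest_net(hyp, ref))
```

The swapped-character utterance falls outside all four patterns, which matches the slides' point that repetitions, swaps, and wrong characters need further handling.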

Design Philosophy of Lexicon Nets

• We need to strike a balance between recognition rate and the flexibility of the lexicon net.
• In the extreme, we can have a net for free syllable decoding to catch all error patterns.
• The flexibility of free syllable decoding is offset by its not-so-high recognition rate.

Scoring Methods for Speech Assessment

Five phone-based scoring methods
• Duration-distribution scores (durDis)
• Log-likelihood scores (hmmLike)
• Log-posterior scores (hmmPost)
• Log-likelihood-distribution scores (likeDis)
• Rank ratio scores (rkRatio)

All are based on forced alignment to segment phones.

Method 1: Duration-distribution Scores

PDF of phone duration
• Obtained from forced alignment
• Normalized by speech rate
• Fitted by a log-normal PDF
• Max PDF → score 100
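A minimal Python sketch of this duration score follows. It assumes the durations are already normalized by speech rate, that the log-normal is fitted by maximum likelihood on the log durations, and that the score is the PDF value rescaled so that the PDF peak maps to 100; the function names and sample durations are illustrative only.

```python
import math


def fit_lognormal(durations):
    """Maximum-likelihood fit: mean and std of the log durations."""
    logs = [math.log(d) for d in durations]
    mu = sum(logs) / len(logs)
    var = sum((x - mu) ** 2 for x in logs) / len(logs)
    return mu, math.sqrt(var)


def lognormal_pdf(x, mu, sigma):
    """Log-normal probability density at x > 0."""
    z = (math.log(x) - mu) / sigma
    return math.exp(-0.5 * z * z) / (x * sigma * math.sqrt(2 * math.pi))


def duration_score(d, mu, sigma):
    """Scale the PDF so that its maximum (at the mode) maps to 100."""
    mode = math.exp(mu - sigma ** 2)
    return 100.0 * lognormal_pdf(d, mu, sigma) / lognormal_pdf(mode, mu, sigma)


# Hypothetical normalized durations (seconds) of one phone in reference data.
mu, sigma = fit_lognormal([0.08, 0.10, 0.12, 0.09, 0.11])
print(duration_score(0.10, mu, sigma))
print(duration_score(0.15, mu, sigma))
```

A duration near the mode of the fitted distribution scores close to 100, and the score falls off for phones that are much shorter or longer than typical.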

Method 2: Log-likelihood Scores

Log-likelihood of phone $q_i$ with a duration of $d$ frames:

$$\hat{l}_i = \frac{1}{d} \sum_{t=t_0}^{t_0+d-1} \log p(y_t \mid q_i)$$

where $p(y_t \mid q_i)$ is the likelihood of frame $t$ with the observation vector $y_t$.

Method 3: Log-posterior Scores

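Log-posterior of phone $q_i$ with duration $d$:

$$\hat{\rho}_i = \frac{1}{d} \sum_{t=t_0}^{t_0+d-1} \log P(q_i \mid y_t)$$

where

$$P(q_i \mid y_t) = \frac{p(y_t \mid q_i)\, P(q_i)}{\sum_{j=1}^{m} p(y_t \mid q_j)\, P(q_j)}$$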
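The log-likelihood and log-posterior scores of Methods 2 and 3 can be sketched in Python as below. The sketch assumes the per-frame likelihoods p(y_t | q_j) of every phone model are already available as a list of rows (one row per frame of the aligned segment); the data and function names are made up for illustration.

```python
import math


def log_likelihood_score(frame_likes, i):
    """Average log p(y_t | q_i) over the d frames of the segment."""
    d = len(frame_likes)
    return sum(math.log(frame[i]) for frame in frame_likes) / d


def log_posterior_score(frame_likes, priors, i):
    """Average log P(q_i | y_t), with the posterior obtained by Bayes' rule."""
    d = len(frame_likes)
    total = 0.0
    for frame in frame_likes:
        # Denominator: sum over all m phone models of p(y_t | q_j) P(q_j).
        evidence = sum(p * prior for p, prior in zip(frame, priors))
        total += math.log(frame[i] * priors[i] / evidence)
    return total / d


# Hypothetical likelihoods for a 2-frame segment and m = 3 phone models.
frame_likes = [[0.5, 0.25, 0.25], [0.8, 0.1, 0.1]]
priors = [1 / 3, 1 / 3, 1 / 3]

ll = log_likelihood_score(frame_likes, 0)
lp = log_posterior_score(frame_likes, priors, 0)
print(ll, lp)
```

Unlike the raw likelihood, the posterior is normalized by the competing phone models, so it is less sensitive to overall changes in likelihood level across frames.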

Method 4: Log-likelihood-distribution Scores

• Use the CDF of a Gaussian for the log-likelihood
• CDF = 1 → score = 100
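As a rough Python sketch of this mapping: the Gaussian parameters mu and sigma are assumed to be estimated beforehand from log-likelihoods of the same phone in reference data (the slide does not say how), and the values used here are hypothetical.

```python
import math


def gaussian_cdf(x, mu, sigma):
    """CDF of a Gaussian with mean mu and standard deviation sigma."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))


def like_dis_score(log_like, mu, sigma):
    """Map a phone log-likelihood to 0..100 so that CDF = 1 gives 100."""
    return 100.0 * gaussian_cdf(log_like, mu, sigma)


# Hypothetical distribution parameters for one phone.
mu, sigma = -60.0, 8.0
for value in (-80.0, -60.0, -40.0):
    print(value, round(like_dis_score(value, mu, sigma), 1))
```

A log-likelihood equal to the mean maps to a score of 50, and higher log-likelihoods approach 100.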

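Method 5: Rank Ratio Scores

Rank ratio

$$rr(q_j) = \frac{\mathrm{rank}(q_j) - 1}{\#\text{ of competing phones} - 1}$$

RR to score conversion

$$\mathrm{score}(q_j; a, b) = \frac{100}{1 + \left( \dfrac{rr(q_j)}{a} \right)^{b}}$$

where parameters a, b are phone specific.

Possible sets of competing phones for x+y
• *+y
• *+*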
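The rank ratio and its conversion to a score can be sketched in Python as follows. The sketch assumes the segment has already been scored against every competing phone (target included), and the likelihood values and the parameters a and b are hypothetical.

```python
def rank_ratio(log_likes, target):
    """(rank - 1) / (number of competing phones - 1); 0 means ranked first."""
    ranked = sorted(log_likes, key=log_likes.get, reverse=True)
    rank = ranked.index(target) + 1
    return (rank - 1) / (len(ranked) - 1)


def rr_to_score(rr, a, b):
    """score = 100 / (1 + (rr / a) ** b), with phone-specific a and b."""
    return 100.0 / (1.0 + (rr / a) ** b)


# Hypothetical log-likelihoods of one segment under four competing phones.
log_likes = {"s": -5.0, "sh": -4.0, "z": -6.0, "c": -7.0}
rr = rank_ratio(log_likes, "s")          # "s" is ranked 2nd of 4
print(rr, rr_to_score(rr, a=0.5, b=2.0))
```

With this conversion a rank ratio of 0 gives a score of 100, a rank ratio equal to a gives 50, and b controls how quickly the score drops as the target phone loses rank.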

Examples of Rank Ratio Scores

[Figure: waveform of an example utterance with forced-alignment labels and rank ratio scores; overall score = 91.49. Syllable scores (with phone scores): 一 yi 100 (i 100); 寸 cun 83 (c 50, u 100, nn 100); 想 xiang 100 (x 100, i 100, a 100, ng 100); 思 si 63 (s 13, ii 100); 一 yi 100 (i 100); 寸 cun 100 (c 100, u 100, nn 100); 灰 hui 100 (h 100, u 100, e 100, i 100); silence segments (sil) are marked -1. Lower panel: pitch contour (Pitch1: unbroken, Pitch2: segmented).]

Demo of Our Prototype

ASR toolbox http://mirlab.org/jang/matlab/toolbox/asr

Command: goDemoSa.m


Intro. to Learning to Rank

Learning to rank
• A supervised learning algorithm which generates a ranking model based on a training set of partially ordered items. (A task somewhat between classification and regression.)

[Figure: an unordered set of items (Item 2, Item 1, Item 7, Item 3, Item 9) is passed through a rank function, which outputs the items ordered by preference (Item 9, Item 3, …).]

Learning to Rank: Methods and App.

Methods
• Pointwise (e.g., Pranking)
• Pairwise (e.g., RankSVM, RankBoost, RankNet)
• Listwise (e.g., ListNet)

Applications
• Webpage ranking
• Machine translation
• Protein structure prediction

Application of LTR to SA

Why use LTR for SA?
• Human scoring is rank-based: Tsing Hua’s grading system is moving from scores (0~100) to ranks (A, B, C, D, …).
• Combination of features (scores): features are complementary.
• Effective determination of ranking: LTR only generates numerical output with a ranking order as close as possible to the correct order. An optimal DP approach is proposed to turn this output into ranks.

LTR Score Segmentation

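Given: LTR scores $s = [s_1, s_2, \dots, s_n]$ (sorted) and desired ranks $r = [r_1, r_2, \dots, r_n]$.

We want to find the separating scores $\theta = [\theta_1, \theta_2, \dots, \theta_{m-1}]$, with score-to-rank function $\mathrm{s2r}(s; \theta)$, such that

$$J(\theta) = \sum_{i=1}^{n} \left| r_i - \mathrm{s2r}(s_i; \theta) \right|$$

is minimized.

[Figure: the sorted score axis s is cut by the separating scores θ_1, θ_2, θ_3, θ_4 into five intervals, Rank 1 to Rank 5, plotted against the desired rank.]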

LTR Score Segmentation by DP (I)

Formulate the problem in the DP framework
• Optimum-value function $D(i,j)$: the minimum cost of mapping $s_1, s_2, \dots, s_i$ to ranks $1, 2, \dots, j$
• Recurrent equation:

$$D(i,j) = |r_i - j| + \min\{D(i-1,j),\; D(i-1,j-1)\}$$

• Boundary condition: $D(1,j) = |r_1 - j|,\; j \in [1, m]$
• Optimum cost: $D(n,m)$

LTR Score Segmentation by DP (II)

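Recurrent formula:

$$D(i,j) = |r_i - j| + \min\{D(i-1,j),\; D(i-1,j-1)\}$$

Local constraint: $D(i,j)$ is reached from either $D(i-1,j)$ or $D(i-1,j-1)$.

[Figure: DP grid with the desired ranks r_1, …, r_13 on the horizontal axis and the computed rank (1 to 5) on the vertical axis. The separating scores are read off where the optimal path changes rank: θ_1 = (s_2 + s_3)/2, θ_2 = (s_6 + s_7)/2, θ_3 = (s_8 + s_9)/2, θ_4 = (s_11 + s_12)/2.]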
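The DP formulation above can be sketched in Python as follows. This is an illustrative implementation of the recurrence and boundary condition as stated on the slides, with separating scores taken as midpoints where the computed rank changes; the function name and the toy data are assumptions, and it is not the authors' code.

```python
def dp_segment(scores, ranks, m):
    """Segment ascending `scores` into ranks 1..m given desired `ranks`.

    Returns (minimum cost D(n, m), computed ranks, separating scores).
    """
    n = len(scores)
    inf = float("inf")
    D = [[inf] * m for _ in range(n)]     # D[i][j]: score i mapped to rank j+1
    back = [[0] * m for _ in range(n)]    # predecessor rank index

    for j in range(m):                    # boundary: D(1, j) = |r_1 - j|
        D[0][j] = abs(ranks[0] - (j + 1))

    for i in range(1, n):
        for j in range(m):
            stay = D[i - 1][j]                        # same rank as before
            step = D[i - 1][j - 1] if j > 0 else inf  # move up one rank
            if step < stay:
                best, back[i][j] = step, j - 1
            else:
                best, back[i][j] = stay, j
            D[i][j] = abs(ranks[i] - (j + 1)) + best

    # Backtrack from D(n, m) to recover the computed rank of every score.
    computed = [0] * n
    j = m - 1
    for i in range(n - 1, -1, -1):
        computed[i] = j + 1
        j = back[i][j]

    # Separating scores: midpoints where the computed rank changes.
    thresholds = [(scores[i] + scores[i + 1]) / 2
                  for i in range(n - 1) if computed[i] != computed[i + 1]]
    return D[n - 1][m - 1], computed, thresholds


# Toy example: six sorted LTR scores with noisy desired ranks.
cost, computed, thresholds = dp_segment(
    [10, 20, 30, 40, 50, 60], [1, 1, 2, 1, 3, 3], m=3)
print(cost, computed, thresholds)  # 1 [1, 1, 2, 2, 3, 3] [25.0, 45.0]
```

Because the path may only stay at the same rank or move up by one, the computed ranks are monotone in the score, which is what makes them expressible as a set of thresholds.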

LTR Score Segmentation with DP (III)

[Figures: (1) Data distribution: class (1 to 5) plotted against feature x1. (2) DP path: alignment of vec1 with vec2 (rank values 1 to 5 over about 250 points); DP total distance = 23.]

Flow Charts of Our Experiment

Corpora for Experiments

WSJ0
• 8000 training utterances, 84 speakers
• For training biphone acoustic models for forced alignment

MIR-SD
• Recordings of about 4000 multi-syllable English words by 22 students (12 females and 10 males) with an intermediate competence level
• Originally designed for stress detection
• Available at http://mirlab.org/dataSet/public

Human Scoring of MIR-SD

Human scoring
• Only 50 utterances from each speaker of MIR-SD are scored by 2 humans, making a total of 1100 utterances.
• Human scoring is consistent:

| Correlation | Inter-rater | HR1-GT | HR2-GT |
|---|---|---|---|
| Word-based | 0.58 | 0.84 | 0.89 |
| Speaker-based | 0.78 | 0.96 | 0.93 |

Score distribution:

| Score | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| Frequency | 110 | 198 | 259 | 409 | 124 |
| Percentage | 10% | 18% | 24% | 37% | 11% |

Examples of MIR-SD

Level 5 apparent, paragraphic, constellation

Level 3 additive, timorous, availably

Level 1 ambiguity, auxiliary, anachronism

Performance Indices

Performance indices used in the literature

Example: hr = [1 3 5 4 2 2], cr = [2 3 5 2 1 4]
• Recognition rate (rRate) = 33.33%
• Recognition rate with tolerance 1 (rRateT1) = 66.67%
• Average absolute difference (AADiff) = 1
• Correlation coefficient (Corr) = 0.54

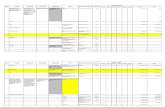

Performance Evaluation of Different Scoring Methods

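| Method | Index | Raw score | DP-based, inside | DP-based, outside | k-means, inside | k-means, outside |
|---|---|---|---|---|---|---|
| durDis | Corr | 0.209 | 0.217 | 0.189 | 0.202 | 0.194 |
| durDis | rRate | | 0.342 | 0.309 | 0.281 | 0.276 |
| durDis | rRateT1 | | 0.783 | 0.771 | 0.701 | 0.696 |
| durDis | AADiff | | 0.906 | 0.942 | 1.109 | 1.122 |
| hmmLike | Corr | 0.120 | 0.168 | 0.102 | 0.144 | 0.154 |
| hmmLike | rRate | | 0.325 | 0.306 | 0.258 | 0.255 |
| hmmLike | rRateT1 | | 0.780 | 0.757 | 0.692 | 0.689 |
| hmmLike | AADiff | | 0.928 | 0.973 | 1.158 | 1.165 |
| hmmPost | Corr | 0.084 | 0.297 | 0.265 | 0.192 | 0.216 |
| hmmPost | rRate | | 0.344 | 0.330 | 0.170 | 0.162 |
| hmmPost | rRateT1 | | 0.811 | 0.798 | 0.565 | 0.561 |
| hmmPost | AADiff | | 0.862 | 0.893 | 1.494 | 1.499 |
| likeDis | Corr | 0.141 | 0.160 | 0.125 | 0.141 | 0.143 |
| likeDis | rRate | | 0.316 | 0.308 | 0.247 | 0.247 |
| likeDis | rRateT1 | | 0.789 | 0.774 | 0.665 | 0.671 |
| likeDis | AADiff | | 0.924 | 0.948 | 1.207 | 1.203 |
| rkRatio | Corr | 0.240 | 0.232 | 0.198 | 0.229 | 0.236 |
| rkRatio | rRate | | 0.333 | 0.316 | 0.269 | 0.268 |
| rkRatio | rRateT1 | | 0.789 | 0.779 | 0.699 | 0.698 |
| rkRatio | AADiff | | 0.898 | 0.929 | 1.120 | 1.124 |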

LTR Combination of Scores

Features for LTR
• durDis and rkRatio: raw scores
• hmmLike, hmmPost, likeDis: DP segmentation

LTR
• RankSVM
• Linear kernel

Baseline
• hmmPost with DP-based segmentation

Overall Performance Comparison

Legends
• Score segmentation: circles for DP, triangles for k-means
• Inside/outside tests: solid lines for inside, dashed lines for outside
• Black lines: baselines

Summary of the Experiment

Segmentation
• DP (supervised learning) is better than k-means (unsupervised learning).

Performance indices
• The correlation coefficient is not intuitive (consider [4 5 4] and [1 2 1]).
• Recognition rate and sum of absolute differences can be optimized by LTR and DP segmentation.
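The remark about the correlation coefficient can be checked numerically. The Python sketch below treats [4 5 4] as human ranks and [1 2 1] as computed ranks (an assumption about which is which) and uses the Pearson coefficient.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / math.sqrt(sum((a - mx) ** 2 for a in x) *
                           sum((b - my) ** 2 for b in y))


human, computed = [4, 5, 4], [1, 2, 1]
corr = pearson(human, computed)
aadiff = sum(abs(a - b) for a, b in zip(human, computed)) / len(human)
exact = sum(a == b for a, b in zip(human, computed)) / len(human)
print(round(corr, 6), aadiff, exact)  # 1.0 3.0 0.0
```

The correlation is perfect even though every computed rank is off by three and none matches, which is why the recognition rate and the absolute difference are the more intuitive indices here.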

Demo: Practice of Mandarin Idioms of Length 4 ( 一語中的 )

Level (difficulty) of an idiom is based on its frequency via Google search:
• 孤掌難鳴 ===> 260,000
• 鶼鰈情深 ===> 43,300
• 亡鈇意鄰 ===> 22,700
• 舉案齊眉 ===> 235,000

Can be adapted for English learning

Next step: multi-threading, fast decoding via FSM

Demo: Recitation Machine(唸唸不忘)

Support Mandarin & English

Support user-defined recitation script

Next step: multithreading for recording & recognition

Licensing for PC Applications

For Mandarin, English, Japanese

SA for Embedded Systems

Embedded platforms: PMP, iPhone, Androids

Demo: Tangible Companions

Chicken run (落跑雞)

Penguin for Tang Poetry (唐詩企鵝)

Robot Fighter (蘿蔔戰士)

Singing Bass & Dog (大嘴鱸魚和唱歌狗)

Tools and Tutorials

Tools
• DCPR toolbox: http://mirlab.org/jang/matlab/toolbox/dcpr
• SAP toolbox: http://mirlab.org/jang/matlab/toolbox/sap
• ASR toolbox: http://mirlab.org/jang/matlab/toolbox/asr

Tutorials
• Data clustering and pattern recognition: http://mirlab.org/jang/books/dcpr
• Audio signal processing: http://mirlab.org/jang/books/audioSignalProcessing

Lab page (with demos): http://mirlab.org

Other SA Issues to be Addressed

Core technology
• Other acoustic features for scoring: pitch (tone/intonation), volume, duration, pause, coarticulation
• Error pattern identification
• Credit assignment for sentence-level scores
• Lack of labeled corpora!

Application side
• Multimodal GUI
• Extensions
  • Slight adaptation: paragraph-level SA, text-free SA
  • Beyond pronunciation: translation + recognition + assessment
• Microphone types

Examples

Coarticulation
• Knock it off!
• Mom woke her up

Consonant + consonant
• Bus stop
• Push Shirley
• Ask question
• Jeff flew south through Tainan
• Exception: Change jobs, Which chair

Ref: “和英文系學生一起上英語聽說課” (a book on taking English listening and speaking classes with English majors), by 黃玟君

Examples

Changes due to coarticulation
• Would you like it?
• Won’t you go?
• Raise your hand.
• It makes you look younger.

Softened sounds
• Junction
• Popcorn
• Fruitful

Can and can’t
• I can read the letter.
• I can’t read the letter.

d and t
• Better
• Cider

Most Likely to be Mispronounced

Within Taiwan
• Pleasure/pressure
• World/war/word
• Shirt/short
• Walk/work
• Flesh/fresh
• Supply/surprise
• Some/son
• Confirm/conform
• Cancel/cancer
• Mouth/mouse
• Measure/major
• Police/please
• Version/virgin

Conclusions

Conclusions
• SA calls for more cues than ASR
• SA requires techniques from ML/IR
• Multi-modal approach to SA is a must (e.g. “Popcorn”, “Thursday”)

On-going & future work
• Tone recognition & assessment
• Reliable error pattern identification

References
• Witt, S. M. and Young, S. J., “Phone-level Pronunciation Scoring and Assessment for Interactive Language Learning”, Speech Communication 30, 95-108, 2000.
• Kim, Y., Franco, H., and Neumeyer, L., “Automatic Pronunciation Scoring of Specific Phone Segments for Language Instruction”, in Proceedings of the 4th European Conference on Speech Communication and Technology (Eurospeech ’97), pp. 649-652, Rhodes, 1997.
• Neumeyer, L., Franco, H., Digalakis, V., and Weintraub, M., “Automatic Scoring of Pronunciation Quality”, Speech Communication 30, 83-93, 2000.
• Franco, H., Neumeyer, L., Digalakis, V., and Ronen, O., “Combination of Machine Scores for Automatic Grading of Pronunciation Quality”, Speech Communication 30, 121-130, 2000.
• Cincarek, T., Gruhn, R., Hacker, C., Nöth, E., and Nakamura, S., “Automatic Pronunciation Scoring of Words and Sentences Independent from the Non-Native’s First Language”, Computer Speech and Language 23, 65-88, 2009.
• Crammer, K. and Singer, Y., “Pranking with Ranking”, in Proceedings of the Conference on Neural Information Processing Systems (NIPS), 2001.
• Joachims, T., “Optimizing Search Engines using Clickthrough Data”, in Proceedings of the ACM Conference on Knowledge Discovery and Data Mining (KDD), ACM, 2002.
• Freund, Y., Iyer, R., Schapire, R. E., and Singer, Y., “An Efficient Boosting Algorithm for Combining Preferences”, in Proceedings of ICML, pp. 170-178, 1998.
• Burges, C., Shaked, T., Renshaw, E., Lazier, A., Deeds, M., Hamilton, N., and Hullender, G., “Learning to Rank using Gradient Descent”, in Proceedings of ICML, pp. 89-96, 2005.
• Cao, Z., Qin, T., Liu, T. Y., Tsai, M. F., and Li, H., “Learning to Rank: From Pairwise Approach to Listwise Approach”, in Proceedings of the 24th International Conference on Machine Learning, pp. 129-136, Corvallis, OR, 2007.
• Chen, Liang-Yu and Jang, Jyh-Shing Roger, “Automatic Pronunciation Scoring using Learning to Rank and DP-based Score Segmentation”, submitted to Interspeech 2010.