Least angle regression - AaltoLARS Least Angle Regression Start with empty set Select xj that is...

Linear problems

$x_{ij}$ are predictor variables and $y_i$ is the response

Find $\beta_j$ which minimize the squared error

$\sum_i (y_i - \hat{\mu}_i)^2$, where $\hat{\mu}_i = \sum_j \beta_j x_{ij}$

Ordinary least squares: $\hat{\beta} = (X^T X)^{-1} X^T y$

Let's assume that $y$ and all $x_j$ are centered to zero and the $x_j$ have unit length
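The setup above can be sketched in NumPy. This is a minimal illustration on synthetic data (not the data used in the slides); the helper names `standardize` and `ols` are hypothetical, and `np.linalg.lstsq` is used instead of forming the inverse explicitly.

```python
import numpy as np

def standardize(X, y):
    """Center y and the columns of X; scale each column to unit Euclidean length."""
    Xc = X - X.mean(axis=0)
    Xs = Xc / np.linalg.norm(Xc, axis=0)
    return Xs, y - y.mean()

def ols(X, y):
    """Least-squares coefficients, computed without forming (X^T X)^{-1}."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

# Synthetic example data (an assumption for illustration only).
rng = np.random.default_rng(0)
X_raw = rng.normal(size=(50, 3))
y_raw = X_raw @ np.array([2.0, 0.0, -1.0]) + 0.1 * rng.normal(size=50)

X, y = standardize(X_raw, y_raw)
beta = ols(X, y)

# The same solution written as in the closed-form normal equations.
beta_normal_eq = np.linalg.solve(X.T @ X, X.T @ y)
```

Both routes give the same coefficients when $X^T X$ is well conditioned; `lstsq` is the numerically safer choice otherwise.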

– p.2

Forward selection

Start with empty model
Select variable most correlated with output
Linear regression from the variable to y
Project other predictors orthogonally to the selected variable
Repeat

Usually overly greedy

Eliminates variables correlated with selected ones
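The forward selection loop above might look roughly like this in NumPy. It is a sketch under assumptions: the function name `forward_selection` and the synthetic data are hypothetical, and correlation is measured as the normalized inner product with the current residual.

```python
import numpy as np

def forward_selection(X, y, n_steps):
    """Greedy forward selection by orthogonal projection (illustrative sketch)."""
    X = X.astype(float).copy()
    r = y.astype(float).copy()
    selected = []
    for _ in range(n_steps):
        norms = np.linalg.norm(X, axis=0)
        corr = np.zeros(X.shape[1])
        ok = norms > 1e-10                     # skip columns already projected away
        corr[ok] = (X[:, ok].T @ r) / norms[ok]
        j = int(np.argmax(np.abs(corr)))       # most correlated remaining variable
        q = X[:, j] / norms[j]
        selected.append(j)
        r = r - q * (q @ r)                    # regress the residual on that variable
        X = X - np.outer(q, q @ X)             # project all predictors orthogonally to it
    return selected, r

# Synthetic example data (an assumption for illustration only).
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 5))
y = 3.0 * X[:, 2] - 2.0 * X[:, 4] + 0.05 * rng.normal(size=40)

selected, resid = forward_selection(X, y, 2)

# After k steps the residual equals the OLS residual on the selected columns.
coef, *_ = np.linalg.lstsq(X[:, selected], y, rcond=None)
resid_ols = y - X[:, selected] @ coef
```

Because each step takes the full regression step on the chosen variable, anything correlated with it loses most of its explanatory power, which is the greediness noted above.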

– p.3

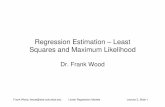

LARS compared to other algorithms

Least Angle Regression (LARS) is "less greedy" than ordinary least squares

Two quite different algorithms, Lasso and Stagewise, give similar results

LARS tries to explain this

Significantly faster than Lasso and Stagewise

– p.4

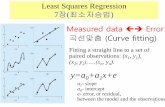

Lasso

Lasso is a constrained version of OLS

$\min \sum_i (y_i - \hat{\mu}_i)^2$ subject to $\sum_j |\beta_j| \le t$

Can be solved with quadratic optimization or with iterative techniques

Parsimonious: $\beta_j \neq 0$ only for some j

Increasing t selects more variables
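One possible iterative technique is coordinate descent, sketched below. Note the swap: this code solves the penalized (Lagrangian) form $\tfrac{1}{2}\|y - X\beta\|^2 + \lambda \sum_j |\beta_j|$ rather than the constrained form on the slide; a smaller $\lambda$ corresponds to a larger bound $t$. The names `lasso_cd` and `soft_threshold`, the iteration count, and the synthetic data are assumptions for illustration.

```python
import numpy as np

def soft_threshold(z, lam):
    """Shrink z toward zero by lam; values within [-lam, lam] become exactly zero."""
    return np.sign(z) * max(abs(z) - lam, 0.0)

def lasso_cd(X, y, lam, n_iter=500):
    """Coordinate descent for 0.5*||y - X b||^2 + lam*||b||_1.
    Assumes the columns of X have unit length."""
    p = X.shape[1]
    beta = np.zeros(p)
    r = y.astype(float).copy()
    for _ in range(n_iter):
        for j in range(p):
            r += X[:, j] * beta[j]             # remove variable j from the fit
            beta[j] = soft_threshold(X[:, j] @ r, lam)
            r -= X[:, j] * beta[j]             # add it back with the new coefficient
    return beta

# Synthetic, standardized example data (an assumption for illustration only).
rng = np.random.default_rng(2)
X = rng.normal(size=(60, 5))
X = X - X.mean(axis=0)
X = X / np.linalg.norm(X, axis=0)
y = X @ np.array([3.0, 0.0, -2.0, 0.0, 0.5]) + 0.1 * rng.normal(size=60)
y = y - y.mean()

lam_max = np.max(np.abs(X.T @ y))              # smallest lam giving the empty model
lams = [lam_max, 0.5 * lam_max, 0.1 * lam_max, 0.0]
betas = [lasso_cd(X, y, lam) for lam in lams]
l1_norms = [np.sum(np.abs(b)) for b in betas]
```

At `lam_max` every coefficient is exactly zero, and at `lam = 0` the solution coincides with OLS; in between, the $\ell_1$ norm of the solution grows as the penalty is relaxed.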

– p.5

Stagewise regression

Forward stagewise linear regression

Choose $x_j$ with highest current correlation $c_j = x_j^T (y - \hat{\mu})$

Take a small step $0 < \epsilon < |c_j|$ in the direction of selected $x_j$

$\hat{\mu} \leftarrow \hat{\mu} + \epsilon \cdot \operatorname{sign}(c_j) \cdot x_j$

Repeat

"Large" step size of $|c_j|$ would lead to ordinary least squares
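The stagewise update above translates almost line by line into code. The following is a sketch under assumptions: the function name `forward_stagewise`, the step size `eps`, the cap `n_steps`, and the synthetic data are all illustrative choices, not values from the slides.

```python
import numpy as np

def forward_stagewise(X, y, eps=0.01, n_steps=5000):
    """Forward stagewise regression with a fixed small step eps (illustrative sketch)."""
    beta = np.zeros(X.shape[1])
    mu = np.zeros(len(y))
    for _ in range(n_steps):
        c = X.T @ (y - mu)                     # current correlations
        j = int(np.argmax(np.abs(c)))
        if abs(c[j]) <= eps:                   # step would violate eps < |c_j|
            break
        s = np.sign(c[j])
        beta[j] += eps * s
        mu += eps * s * X[:, j]                # mu <- mu + eps * sign(c_j) * x_j
    return beta, mu

# Synthetic, standardized example data (an assumption for illustration only).
rng = np.random.default_rng(3)
X = rng.normal(size=(60, 5))
X = X - X.mean(axis=0)
X = X / np.linalg.norm(X, axis=0)
y = X @ np.array([3.0, 0.0, -2.0, 0.0, 0.0]) + 0.1 * rng.normal(size=60)
y = y - y.mean()

beta, mu = forward_stagewise(X, y)
```

With unit-length predictors, each step lowers the squared error by $2\epsilon|c_j| - \epsilon^2$, so progress is guaranteed as long as $\epsilon < |c_j|$, at the price of many small iterations.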

– p.6

Non-linear extension

The Stagewise idea can easily be extended to non-linear models

Boosting

Fit regression tree to residuals
Finds the most correlated tree
Take a small step in the direction of fitted values
Repeat
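As a rough illustration of the boosting loop above, the sketch below uses the simplest possible regression tree, a single-split stump on one feature. The names `fit_stump` and `boost`, the shrinkage factor `step`, the number of rounds, and the sine-curve data are all assumptions made for this example.

```python
import numpy as np

def fit_stump(x, r):
    """Best single-split regression stump on feature x for residuals r.
    Returns (threshold, left mean, right mean)."""
    best = None
    for t in np.unique(x)[:-1]:                # every split leaves both sides non-empty
        left = x <= t
        lo, hi = r[left].mean(), r[~left].mean()
        sse = np.sum((r - np.where(left, lo, hi)) ** 2)
        if best is None or sse < best[0]:
            best = (sse, t, lo, hi)
    return best[1:]

def boost(x, y, n_rounds=200, step=0.1):
    """Stagewise boosting: repeatedly fit a stump to the residuals, take a small step."""
    mu = np.full(len(y), y.mean(), dtype=float)
    for _ in range(n_rounds):
        t, lo, hi = fit_stump(x, y - mu)
        mu += step * np.where(x <= t, lo, hi)  # small step toward the fitted values
    return mu

# Synthetic non-linear example (an assumption for illustration only).
x = np.linspace(0.0, 2.0 * np.pi, 80)
y = np.sin(x)

mu = boost(x, y)
mse_initial = np.mean((y - y.mean()) ** 2)
mse_final = np.mean((y - mu) ** 2)
```

The structure mirrors linear Stagewise: the stump plays the role of the most correlated predictor, and `step` plays the role of $\epsilon$.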

– p.7

Diabetes data

Main example in the paper

n = 442 patients

10 variables: age, body mass index, blood pressure, serum measurements, . . .

Response variable is "a quantitative measure of disease progression one year later"

Variable selection problem: which of the variables affect the disease

– p.8

Comparison of Lasso and Stagewise

– p.9

LARS

Least Angle Regression

Start with empty set
Select $x_j$ that is most correlated with residuals $y - \hat{\mu}$
Proceed in the direction of $x_j$ until another variable $x_k$ is equally correlated with residuals
Choose equiangular direction between $x_j$ and $x_k$
Proceed until third variable enters the active set, etc.

Step is always shorter than in OLS

– p.10
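The LARS steps listed above can be sketched in NumPy as follows. This is a minimal, unoptimized illustration of plain LARS without the Lasso or Stagewise modifications; it assumes centered data with unit-length, linearly independent predictors. The function name `lars` and the synthetic data are hypothetical.

```python
import numpy as np

def lars(X, y, n_steps=None):
    """Plain LARS sketch. Returns the coefficient path and the active set order."""
    n, p = X.shape
    n_steps = p if n_steps is None else n_steps
    beta, mu, active = np.zeros(p), np.zeros(n), []
    path = [beta.copy()]
    for _ in range(n_steps):
        c = X.T @ (y - mu)                         # current correlations
        C = np.max(np.abs(c))
        if not active:
            active.append(int(np.argmax(np.abs(c))))
        s = np.sign(c[active])
        XA = X[:, active] * s                      # sign-adjusted active columns
        g = np.linalg.solve(XA.T @ XA, np.ones(len(active)))
        A = 1.0 / np.sqrt(g.sum())
        w = A * g
        u = XA @ w                                 # equiangular unit vector
        a = X.T @ u
        inactive = [j for j in range(p) if j not in active]
        if inactive:
            cands = []
            for j in inactive:                     # step length: scan inactive variables
                for gam in ((C - c[j]) / (A - a[j]), (C + c[j]) / (A + a[j])):
                    if gam > 1e-12:
                        cands.append((gam, j))
            gamma, j_new = min(cands)
        else:
            gamma, j_new = C / A, None             # last step reaches the OLS fit
        mu = mu + gamma * u
        beta[active] += gamma * s * w
        path.append(beta.copy())
        if j_new is not None:
            active.append(j_new)
    return np.array(path), active

# Synthetic, standardized example data (an assumption for illustration only).
rng = np.random.default_rng(4)
X = rng.normal(size=(60, 5))
X = X - X.mean(axis=0)
X = X / np.linalg.norm(X, axis=0)
y = X @ np.array([3.0, 0.0, -2.0, 1.0, 0.0]) + 0.1 * rng.normal(size=60)
y = y - y.mean()

path, active = lars(X, y)
beta_ols, *_ = np.linalg.lstsq(X, y, rcond=None)
```

After as many steps as there are variables, the last row of `path` coincides with the OLS solution; the earlier rows are the intermediate, less greedy fits.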

Geometrical presentation

– p.11

Computing LARS

Every step a new variable enters the active set ⇒ no more steps than variables

New direction can be solved with linear algebra

Step length by iterating over all variables not in active set

By cleverly updating estimates from previous iteration, the computation cost will be comparable to OLS
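For reference, the direction and the step length can be written out explicitly. The formulas below follow the notation of the LARS paper by Efron, Hastie, Johnstone and Tibshirani, which is an assumption here since the slides do not define these symbols: $\mathcal{A}$ is the active set, $s_j$ the sign of the current correlation $c_j$, $X_{\mathcal{A}}$ the matrix of sign-adjusted active columns $s_j x_j$, $1_{\mathcal{A}}$ a vector of ones, and $C = \max_j |c_j|$.

```latex
\begin{aligned}
G_{\mathcal{A}} &= X_{\mathcal{A}}^T X_{\mathcal{A}}, &
A_{\mathcal{A}} &= \left( 1_{\mathcal{A}}^T G_{\mathcal{A}}^{-1} 1_{\mathcal{A}} \right)^{-1/2}, \\
w_{\mathcal{A}} &= A_{\mathcal{A}} \, G_{\mathcal{A}}^{-1} 1_{\mathcal{A}}, &
u_{\mathcal{A}} &= X_{\mathcal{A}} w_{\mathcal{A}}, \\
a &= X^T u_{\mathcal{A}}, &
\hat{\gamma} &= \min_{j \notin \mathcal{A}}{}^{+}
  \left\{ \frac{C - c_j}{A_{\mathcal{A}} - a_j}, \; \frac{C + c_j}{A_{\mathcal{A}} + a_j} \right\}
\end{aligned}
```

Here $\min^{+}$ takes the minimum over positive candidates only, and the fit is updated as $\hat{\mu} \leftarrow \hat{\mu} + \hat{\gamma} u_{\mathcal{A}}$. The unit vector $u_{\mathcal{A}}$ makes equal angles with all active predictors, which is the equiangular direction.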

– p.12

LARS results

– p.13

Lasso modification

LARS can be modified to give Lasso solution

In the Lasso algorithm signs of the $\beta_j$ and $c_j$ must agree

Take only as long a LARS step as is possible without changing the sign of any $\beta_j$

This works if only one variable at a time enters the active set

Unlike LARS, variables can be removed from active set
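The sign rule can be made concrete with a short formula. The notation is borrowed from the LARS paper and is an assumption here, since the slides do not define it: $\hat{\beta}_j$ is the current coefficient of active variable $j$, $d_j$ is the rate at which it changes along the current LARS direction, and $\hat{\gamma}$ is the ordinary LARS step length.

```latex
\gamma_j = -\frac{\hat{\beta}_j}{d_j}, \qquad
\tilde{\gamma} = \min_{\gamma_j > 0} \gamma_j
```

If $\tilde{\gamma} < \hat{\gamma}$, some coefficient would cross zero before the next variable enters, so the step is stopped at $\tilde{\gamma}$ and that variable is removed from the active set before the next direction is computed.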

– p.14

Stagewise modification

The Stagewise step is a positive combination of active set variables

LARS has no sign restrictions on the direction vector

Project LARS vector to positive convex cone

This leads to Stagewise solution (assuming Stagewise step size → 0)

– p.15

Other modifications

LARS/OLS hybrid

Select the model with LARS, but the parameter values with OLS

Main effects first

Run LARS until most important variables are in the active set
Restart with predictor variables replaced by interaction terms between already selected variables
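The LARS/OLS hybrid above amounts to a refit on the selected columns. A minimal sketch, assuming the active set is already available as a list of column indices; the function name `ols_refit`, the example index list, and the synthetic data are hypothetical.

```python
import numpy as np

def ols_refit(X, y, active):
    """Refit by OLS using only the columns in `active`; other coefficients stay zero."""
    beta = np.zeros(X.shape[1])
    coef, *_ = np.linalg.lstsq(X[:, active], y, rcond=None)
    beta[active] = coef
    return beta

# Synthetic example data (an assumption for illustration only).
rng = np.random.default_rng(5)
X = rng.normal(size=(50, 4))
y = 2.0 * X[:, 0] - 1.5 * X[:, 2] + 0.1 * rng.normal(size=50)

active = [0, 2]          # hypothetical active set, e.g. taken from a LARS run
beta_hybrid = ols_refit(X, y, active)
```

The refit removes the shrinkage that LARS applies to the selected coefficients while keeping the variable selection itself.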

– p.16

Stopping criteria

When to stop?

$C_p(\hat{\mu})$ is an unbiased estimator of $E\left( \frac{\|\hat{\mu} - \mu\|^2}{\sigma^2} \right)$

Simple formula for $C_p$ in the kth LARS step:

$C_p(\hat{\mu}_k) = \|y - \hat{\mu}_k\|^2 / \bar{\sigma}^2 - n + 2k$
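The $C_p$ formula is simple enough to compute directly. In the sketch below the function name `lars_cp` is hypothetical, and the variance estimate `sigma2` is assumed to be supplied by the caller (for example from the residuals of the full OLS fit); the numbers are toy values chosen for easy arithmetic.

```python
import numpy as np

def lars_cp(y, mu_k, k, sigma2):
    """C_p for the k-th LARS step: ||y - mu_k||^2 / sigma2 - n + 2k."""
    n = len(y)
    return np.sum((y - mu_k) ** 2) / sigma2 - n + 2 * k

# Toy numbers (an assumption for illustration only).
y = np.array([1.0, 2.0, 3.0, 4.0])
mu_1 = np.array([1.0, 2.0, 3.0, 3.0])          # fit after one step, residual norm^2 = 1
cp_1 = lars_cp(y, mu_1, k=1, sigma2=0.5)       # 1 / 0.5 - 4 + 2 = 0
```

A natural stopping rule is then to evaluate $C_p$ after every step and keep the step with the smallest value.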

– p.17

Summary

Lasso and Stagewise can be seen as modifications of LARS

This explains similar results

LARS is more efficient to compute

Other modifications

– p.18