Comput Geosci (2010) 14 301–310


DOI 10.1007/s10596-009-9154-x

ORIGINAL PAPER

Textural identification of basaltic rock mass using image processing and neural network

Naresh Singh · T. N. Singh · Avyaktanand Tiwary · Kripa M. Sarkar

Received: 25 December 2008 / Accepted: 17 August 2009 / Published online: 9 September 2009 © Springer Science + Business Media B.V. 2009

Abstract A new approach to identify rock texture, based on image processing of thin sections of different basalt rock samples, is proposed here. The methodology takes an RGB or grayscale image of a thin section of a rock sample as input and extracts 27 numerical parameters. A multilayer perceptron neural network takes these parameters as input and provides, as output, the estimated texture class of the rock. For this purpose, we have used 300 different thin sections and extracted 27 parameters from each one to train the neural network, which identifies the texture of the input image according to a previously defined classification. To test the methodology, 90 images (30 in each of three test sets) from different thin sections of different areas are used. The methodology has shown 92.22% accuracy in automatically identifying the textures of basaltic rock using digitized images of thin sections of 140 rock samples. The technique is therefore promising for the geosciences and can be used to identify rock texture quickly and accurately.

Keywords Image processing · Multilayer perceptron neural network · Rock texture · RGB or grayscale images

N. Singh (✉) · A. Tiwary
Department of Mining Engineering, Institute of Technology, Banaras Hindu University, Varanasi, India
e-mail: [email protected]

T. N. Singh · K. M. Sarkar
Department of Earth Sciences, Indian Institute of Technology, Bombay, India

1 Introduction

Texture refers to the degree of crystallinity, grain size, and fabric (geometrical relationships) among the constituents of a rock. Textural features are probably the most important aspect of a rock because they are a necessary aid in understanding the conditions under which the rock crystallized (e.g., cooling and nucleation rates and order of crystallization), which in turn depend on the initial composition, temperature, pressure, gaseous contents, and viscosity of the source rock. Texture is identified by visual estimation in the field with a hand lens (approximately 10×) as well as under the microscope.

Basaltic rock is chosen for this study. Basalt mainly contains plagioclase, pyroxene, glass, and groundmass. It shows porphyritic, intergranular, intersertal, ophitic, and subophitic textures [1]. In ophitic and subophitic textures, plagioclase laths are enclosed by pyroxene, olivine, or other ferromagnesian minerals. In porphyritic texture, phenocrysts (large crystals) are surrounded by the groundmass. The groundmass has domains of intersertal texture (glass occupies the wedge-shaped interstices between plagioclase laths), intergranular texture (the spaces between plagioclase laths are occupied by one or more grains of pyroxene), and ophitic texture. This paper concerns the most common textures of basaltic rock, mainly porphyritic, intergranular, and ophitic textures.

An application of soft computing at this stage of study in the geosciences has been discussed by Tagliaferri et al. [2]. This technique has also been used for texture identification of carbonate rocks by Marmo et al. [3]. Singh and Mohan Rao [4] have used this approach to classify ores for ferromanganese metallurgical plant feed. Ore texture identification using image-processing techniques has been reported by Petersen et al. [5], Henley [6], Jones and Horton [7], and Barbery and Leroux [8].

The methodology presented in this paper can identify the most common textures of basalt rock without much difficulty. For this purpose, 390 thin sections of basalt rocks from different areas were prepared in the laboratory [9].

2 Presenting image-processing methodology

The image of a thin section of basaltic rock is processed using the Image Processing Toolbox of Matlab. In this toolbox, an image is treated as a matrix whose elements are called pixels, and the value of each pixel is called its pixel value or intensity. An RGB image is treated as a three-dimensional matrix and a grayscale image as a two-dimensional matrix. Each pixel carries its own value, but individual pixel values cannot classify the texture of an image, so numerical parameters that distinguish the textures of images are needed. These parameters are extracted from an image using image-processing techniques and expressed as numerical values that differentiate one class of image from another.

The neural network is first trained on the extracted data set (or part of it) and then classifies the textural image. Each output of the neural network is the probability that the image belongs to one of the texture classes discussed previously.

The following steps were followed during analysis:

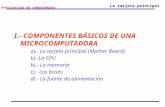

Image acquisition Image dimension reduction Image filtration Feature extraction Feature normalization Input dimension reduction Neural network.

Image-processing techniques are applied for image acquisition, dimension reduction, filtration, and feature extraction, whereas feature normalization and input dimension reduction are carried out as part of the neural network stage. The program developed for this purpose identifies textures quickly on an ordinary computer.

2.1 Image acquisition

Image acquisition is the process of mapping an image into a digital representation, which is then processed for feature extraction. The program requires an RGB image (JPEG format) of a thin section, larger than 280 × 391 pixels, acquired by a digital camera at 5× magnification.

2.2 Image dimension reduction

In this step, the digital image from the previous step is reduced to 280 × 391 pixels so that the program can be generalised to thin-section images of all sizes (greater than 280 × 391 pixels) and magnifications. Reducing the size of an image can introduce artifacts, such as aliasing, in the output image because information is always lost when the size of an image is reduced. To minimize these artifacts, the bicubic interpolation method is used [10]. The resulting reduced RGB image is converted into a grayscale image with intensity varying from 0 to 255.
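The paper does not state how the RGB image is converted to grayscale. A minimal sketch, assuming the channel weights documented for MATLAB's `rgb2gray` (0.2989 R + 0.5870 G + 0.1140 B), is shown below; the function name `rgb_to_gray` is hypothetical and the image is a plain nested list of (R, G, B) tuples.

```python
def rgb_to_gray(rgb):
    """Convert rows of (R, G, B) tuples with values 0-255 into gray levels 0-255.

    Assumption: the weights below are those documented for MATLAB's rgb2gray;
    the paper does not state which conversion was used.
    """
    gray = []
    for row in rgb:
        gray.append([
            # Weighted sum of the three channels, rounded and clipped to 0..255.
            min(255, max(0, round(0.2989 * r + 0.5870 * g + 0.1140 * b)))
            for r, g, b in row
        ])
    return gray


print(rgb_to_gray([[(255, 255, 255), (255, 0, 0), (0, 0, 0)]]))  # [[255, 76, 0]]
```

Green dominates the weighted sum, so a pure green pixel maps to a brighter gray level than a pure red one.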

2.3 Image filtration

A median filter with a 3 × 3 pixel neighborhood is used to remove small distortions without reducing the sharpness of the image [3]. It is a nonlinear filter that replaces the value of a pixel by the median of the gray levels in the neighborhood of that pixel (the original value of the pixel is included in the computation of the median). It provides excellent noise-reduction capabilities, with considerably less blurring than linear smoothing filters of similar size [10].
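The 3 × 3 median filter can be sketched in plain Python as follows. Border handling is not described in the paper; this sketch pads with zeros (our understanding is that MATLAB's `medfilt2` also zero-pads by default), so pixels at the image border may darken, and replicate padding is a common alternative. The name `median_filter_3x3` is hypothetical.

```python
from statistics import median


def median_filter_3x3(img):
    """3x3 median filter over a 2-D list of gray levels.

    Assumption: pixels outside the image are treated as 0 (zero padding).
    """
    rows, cols = len(img), len(img[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            window = []
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr, cc = r + dr, c + dc
                    inside = 0 <= rr < rows and 0 <= cc < cols
                    window.append(img[rr][cc] if inside else 0)
            # The centre pixel itself is part of the 9-value window.
            out[r][c] = median(window)
    return out


noisy = [[100] * 5 for _ in range(5)]
noisy[2][2] = 255                      # a single bright "salt" pixel
print(median_filter_3x3(noisy)[2][2])  # 100: the spike is removed
```

Because the output is one of the nine input values rather than an average, a single outlier is discarded instead of being smeared over its neighbours.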

2.4 Feature extraction

In this step, a number of operations are performed on the image resulting from the previous steps to measure various features of the image and its objects. These features have numerical values that are later used as input to the neural network. The features were identified by performing several tests on images having different textures (Figs. 1 and 2).

Each feature describes comparative properties of the image and its objects. The features are grouped into three categories: statistical features, image features, and region features.

Every category has its own parameters. Image features determine various qualities of the image (Fig. 3). Region features determine the parameters related to regions selected from the image (Fig. 8), and statistical features determine the statistical properties of the image intensity histogram (Fig. 4). Table 1 shows the parameters making up each feature category.

Every feature is described in the following sections.
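Table 1 lists six statistical, five image, and four region parameters. A count consistent with the 27 parameters stated in the Abstract is obtained if the four region parameters are measured separately on each of the four biggest white areas described in Sect. 2.4.3; this breakdown is our reading rather than something the text states explicitly:

$$\underbrace{6}_{\text{statistical}} + \underbrace{5}_{\text{image}} + \underbrace{4 \times 4}_{\text{region, 4 objects}} = 27$$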

Fig. 1 Flow chart of the program: RGB image → image acquisition → image dimension reduction → image filtration → feature extraction (statistical, image, and region features) → feature normalization → neural network

2.4.1 Statistical features

These features are descriptors of the intensity histogram of the image (Fig. 3) [10]. An image histogram is a plot that shows how many pixels fall within each range of gray tones. This plot has 20 equally spaced bins, each representing an intensity range.

Mean intensity This parameter measures the average or mean intensity of the image. The expression for this parameter is

$$m = \sum_{i=0}^{L-1} z_i \, p(z_i) \qquad (1)$$

where $z_i$ is a random variable indicating intensity, $p(z_i)$ is the normalized histogram of the intensity levels, and $L$ is the number of possible intensity levels.

Fig. 2 Porphyritic texture of basalt thin section. Size is 311 × 506 pixels. Pl plagioclase, Ol olivine, Px pyroxene

Standard deviation This parameter is a measure of the average contrast of the image (Fig. 3). It is written as

$$\mathrm{SD} = \sqrt{\mu_2(z)} = \sigma \qquad (2)$$

where $\mu_2(z) = \sum_{i=0}^{L-1} (z_i - m)^2 \, p(z_i)$ is the second moment, which is equal to the variance $\sigma^2$.

Smoothness This parameter measures the relative smoothness of the intensity in a region.

$$\text{Smoothness} = 1 - \frac{1}{1 + \sigma^2} \qquad (3)$$

Fig. 3 A grayscale resized digital image

Fig. 4 A narrower image histogram of porphyritic texture image (horizontal axis: gray level, 0–250; vertical axis: pixel count, 0–1,200)

Smoothness is zero for a region of constant intensity, such as the intertwining grains (pyroxene granules in angular interstices between feldspars) of intergranular texture and the phenocrysts of porphyritic texture, and approaches 1 for regions with large excursions in intensity values, such as ophitic texture.

Third moment This parameter measures the skewness of a histogram.

$$\mu_3 = \sum_{i=0}^{L-1} (z_i - m)^3 \, p(z_i) \qquad (4)$$

The value of this parameter is zero for a symmetric histogram, positive for a histogram skewed to the right, and negative for a histogram skewed to the left. Its value is positive for ophitic texture and negative for intergranular and porphyritic textures.

Uniformity This parameter measures the uniformity of the image. Its value is maximum when all gray levels are equal and decreases from there.

$$U = \sum_{i=0}^{L-1} p^2(z_i) \qquad (5)$$

Table 1 Features and their parameters

| Statistical features | Image features | Region features |
|---|---|---|
| Mean intensity | Percentage of most frequent gray tones | Area of object |
| Standard deviation | Number of edge pixels in the gray areas | Extent |
| Smoothness | Number of perimeter pixels | Solidity |
| Third moment | Number of white areas | Convex deficiency |
| Uniformity | Number of pixels of white areas | |
| Entropy | | |

Because plagioclase laths in ophitic and subophitic textures are enclosed by pyroxene, olivine, or other ferromagnesian minerals, these textures have very low uniformity. Porphyritic texture with only one phenocryst has maximum uniformity. Intergranular texture also has high uniformity.

Entropy This parameter measures the randomness of intensity levels in the image (Fig. 3).

$$E = -\sum_{i=0}^{L-1} p(z_i) \log_2 p(z_i) \qquad (6)$$

Ophitic texture has the highest entropy because plagioclase is randomly distributed in the groundmass and surrounded by pyroxene or olivine, which increases the randomness. Intergranular and porphyritic textures show less randomness.

2.4.2 Image features

These features are descriptors of the image (Fig. 3) [3].

Percentage of most frequent gray tone The value of this parameter is the percentage of gray tones that appear most frequently in the image. This feature can be visualized in the image histogram. An image of ophitic texture has a broader histogram because of its randomly distributed plagioclase, so the value of this parameter for ophitic texture is greater than for images of intergranular and porphyritic texture, which have narrower and higher histograms.

Number of edge pixels in the gray areas A grayscale image is processed by the Canny operator, which detects both weak and strong edges in the image. An edge in an image is defined as a place where a sharp change in intensity occurs. The Canny operator finds edges by determining local maxima of the gradient of the intensity function. The image resulting from this operation is a binary image in which white pixels correspond to edges. The value of this parameter is the number of white pixels in this binary image. The binary image of intergranular texture has the maximum number of edge pixels because it is composed of a large number of crystals; fewer crystals are present in the other textures, so their images have fewer white pixels (Fig. 5).

Number of perimeter pixels The grayscale image is converted into a binary image containing pixels with values 0 and 1. The value of this parameter is the number of perimeter pixels present in the binary image. A pixel is considered a perimeter pixel if it satisfies both of these criteria:

(a) The pixel is on.

(b) One (or more) of the pixels in its neighborhood is off.

A pixel is on when its pixel value is 1 and off when its pixel value is 0 [10].

When the binary image is converted into an image of perimeter pixels, the white pixels are the perimeter pixels, and the black pixels show the larger crystals or phenocrysts in porphyritic texture and the pyroxene granules and angular feldspar in intergranular texture (Fig. 6).
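Criteria (a) and (b) can be sketched as below. The paper does not define the neighborhood or the treatment of the image border; this sketch assumes a 4-connected neighborhood (to our knowledge also the 2-D default of MATLAB's `bwperim`) and treats pixels outside the image as off. The function name is hypothetical.

```python
def perimeter_pixels(binary):
    """Mark on-pixels that have at least one off pixel among their 4 neighbours.

    Assumptions: 4-connected neighbourhood; pixels outside the image count as off.
    """
    rows, cols = len(binary), len(binary[0])

    def is_on(r, c):
        return 0 <= r < rows and 0 <= c < cols and binary[r][c] == 1

    perim = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            neighbours = ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
            # Criterion (a): the pixel is on; criterion (b): some neighbour is off.
            if binary[r][c] == 1 and not all(is_on(nr, nc) for nr, nc in neighbours):
                perim[r][c] = 1
    return perim


block = [[0] * 5 for _ in range(5)]
for r in range(1, 4):
    for c in range(1, 4):
        block[r][c] = 1                        # a solid 3x3 object
print(sum(map(sum, perimeter_pixels(block))))  # 8: every pixel except the centre
```

The parameter described above is then simply the count of ones in the returned mask.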

Number of white areas The value of this parameter is the number of objects in the binary image obtained after converting the grayscale image into binary. It is determined by converting the binary image matrix into a label matrix of the same size in which the objects are labeled; this gives the number of white areas (objects) in the binary image. The image of intergranular texture has the maximum number of white areas, while the image of ophitic texture has the least number of objects (Fig. 7).

Fig. 5 Resulting image of the Canny operator applied to the image shown in Fig. 3

Fig. 6 Perimeter pixels of the image shown in Fig. 3

Number of pixels of white areas The value of this parameter is the total number of white pixels in the binary image used for the previous parameter. The image of intergranular texture has the maximum number of white pixels, while the image of ophitic texture has the least (Fig. 8).
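The label matrix and the two white-area parameters can be sketched with a breadth-first flood fill. The sketch assumes 8-connectivity, the default of MATLAB's `bwlabel`; the paper does not say which connectivity was used. Besides the number of white areas, the returned `areas` list gives the total number of white pixels and the four biggest white areas used in Sect. 2.4.3. The function name is hypothetical.

```python
from collections import deque


def label_regions(binary):
    """Label 8-connected white areas; return (label matrix, list of areas in pixels)."""
    rows, cols = len(binary), len(binary[0])
    labels = [[0] * cols for _ in range(rows)]
    areas = []
    for r in range(rows):
        for c in range(cols):
            if binary[r][c] != 1 or labels[r][c]:
                continue
            label = len(areas) + 1
            labels[r][c] = label
            queue, size = deque([(r, c)]), 0
            while queue:                      # breadth-first flood fill
                y, x = queue.popleft()
                size += 1
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and binary[ny][nx] == 1 and not labels[ny][nx]):
                            labels[ny][nx] = label
                            queue.append((ny, nx))
            areas.append(size)
    return labels, areas


img = [[1, 1, 0, 0, 0],
       [1, 0, 0, 1, 0],
       [0, 0, 0, 0, 0],
       [0, 1, 1, 1, 0],
       [0, 0, 0, 0, 1]]
labels, areas = label_regions(img)
print(len(areas), sum(areas), sorted(areas, reverse=True)[:4])  # 3 8 [4, 3, 1]
```

With 8-connectivity the isolated pixel at the bottom right joins the row above it through a diagonal; under 4-connectivity it would count as a separate white area.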

2.4.3 Region features

The four biggest white areas are chosen from the binary image obtained for the previous features. The following parameters are determined from an image containing one object at a time.

Area of object This parameter is the total number of white pixels in the binary image containing a single object (white area). The image of ophitic texture has large plagioclase crystals, which correspond to a bigger object area. The image of intergranular texture has smaller pyroxene granules between feldspar crystals, which give a smaller object area (Fig. 9).

Fig. 7 A label matrix showing the white areas

Fig. 8 Four biggest white areas in an image of porphyritic texture

Convex deficiency The convex deficiency of an object is determined as

$$\mathrm{CD} = H - S \qquad (7)$$

where $H$ is the convex hull (the smallest convex set containing $S$) and $S$ is the area of the object (determined in the previous paragraph) [10]. The image of ophitic texture has a higher convex deficiency, because the difference between the area of the convex hull and the white area is larger owing to the crystalline, sharp-edged structure of plagioclase. The image of porphyritic texture has rounded phenocrysts, so the convex hull, and hence the convex deficiency, is smaller.

Extent This parameter is the proportion of pixels in the bounding box that are also in the object. The bounding box is the smallest rectangle containing the object. The value of this parameter is the area of the object divided by the area of the bounding box [10].

Solidity This parameter corresponds to the proportion of pixels in the convex hull that are also in the object. It is computed as the area of the object divided by the convex area [10].
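The four region parameters can be sketched from the (row, column) coordinates of one object. Here the convex hull is computed over the pixel corners with Andrew's monotone-chain algorithm and measured as a polygon area; this is our simplification, whereas MATLAB's `regionprops` counts the pixels inside the hull (`ConvexArea`), so the values will differ slightly. All function names are hypothetical.

```python
def convex_hull(points):
    """Andrew's monotone-chain convex hull; returns the hull vertices in order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(poly):
    """Shoelace formula."""
    s = sum(x1 * y2 - x2 * y1 for (x1, y1), (x2, y2) in zip(poly, poly[1:] + poly[:1]))
    return abs(s) / 2


def region_features(pixels):
    """Area S, convex deficiency H - S, extent and solidity of one object.

    `pixels` is a list of (row, col) coordinates. Simplification: H is the polygon
    area of the hull of the pixel corners, not a pixel count.
    """
    S = len(pixels)
    corners = [(r + dr, c + dc) for r, c in pixels for dr in (0, 1) for dc in (0, 1)]
    H = polygon_area(convex_hull(corners))
    rows = [r for r, _ in pixels]
    cols = [c for _, c in pixels]
    bbox = (max(rows) - min(rows) + 1) * (max(cols) - min(cols) + 1)
    return {"area": S, "convex_deficiency": H - S, "extent": S / bbox, "solidity": S / H}


# An L-shaped object of three pixels:
# area 3, convex_deficiency 0.5, extent 0.75, solidity ~0.857
print(region_features([(0, 0), (1, 0), (1, 1)]))
```

A filled rectangle gives zero convex deficiency and solidity and extent of 1, while angular, re-entrant shapes such as the L lower both ratios, which is the contrast the text draws between sharp-edged plagioclase and rounded phenocrysts.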

3 Feature normalization

Before the input data are used in the neural network, it is often useful to scale them within a specified range. A linear transformation is performed to scale the inputs to the range [−1, 1]. This is known as feature normalization. Normalizing the inputs prevents individual features from dominating the others and yields values in ranges that are easily comparable. The normalized data are used to train the neural network.
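A minimal sketch of the linear scaling to [−1, 1], in the spirit of MATLAB's `mapminmax` (whose default output range is [−1, 1]): per-feature minima and maxima are fitted on the training samples and reused for validation and test samples. Mapping a constant feature to the midpoint of the range is our assumption, and the function names are hypothetical.

```python
def fit_minmax(samples):
    """Per-feature (min, max) over training samples (one list of features per sample)."""
    return [(min(col), max(col)) for col in zip(*samples)]


def apply_minmax(sample, ranges, lo=-1.0, hi=1.0):
    """Linearly map each feature of one sample into [lo, hi] using training ranges."""
    out = []
    for x, (xmin, xmax) in zip(sample, ranges):
        if xmax == xmin:
            out.append((lo + hi) / 2)   # constant feature: midpoint (our assumption)
        else:
            out.append(lo + (hi - lo) * (x - xmin) / (xmax - xmin))
    return out


train = [[0.0, 10.0], [5.0, 20.0], [10.0, 30.0]]
ranges = fit_minmax(train)
print([apply_minmax(s, ranges) for s in train])  # [[-1.0, -1.0], [0.0, 0.0], [1.0, 1.0]]
```

Reusing the training ranges keeps test images on the same scale as the training data; a test value outside the training range simply maps slightly outside [−1, 1].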

4 Input dimension reduction

To reduce the input dimension, principal component analysis (PCA), an effective procedure for this purpose, is used.

Fig. 9 a Biggest white area in porphyritic texture image. b Convex hull of image in a

Fig. 10 Structure of a typical neuron: inputs p1, p2, p3, …, pR are multiplied by the weights w1,1, …, w1,R, summed with the bias b at the summing node, and passed through the transfer function f to give the output a = f(Wp + b)

PCA performs this operation in the following three steps. It orthogonalizes the components of the input vectors (so that they are uncorrelated with each other). It then orders the resulting orthogonal components (principal components) so that those with the largest variation come first. Finally, it eliminates the components that contribute the least to the variation in the data set. In PCA, the eigenvectors of the covariance matrix of the feature set that have the largest eigenvalues are computed [11]. PCA approximates the feature space by a linear subspace, selecting the most expressive features, namely those related to the eigenvectors with the largest eigenvalues [3].

In this case, PCA reduces the dimension of the input vector from 27 to 19.
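The three PCA steps can be sketched without external libraries. This version estimates the leading eigenvectors of the covariance matrix by power iteration with deflation, which stands in for a full eigendecomposition, and takes the number of retained components `k` as an argument (19 in the paper). The paper does not state the retention criterion that produced 19, and the function names are hypothetical.

```python
import math
import random


def covariance(samples):
    """Feature means and sample covariance matrix (rows = samples)."""
    n, d = len(samples), len(samples[0])
    means = [sum(col) / n for col in zip(*samples)]
    centered = [[x - m for x, m in zip(row, means)] for row in samples]
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    return means, cov


def top_components(cov, k, iters=500, seed=0):
    """Leading k eigenvectors/eigenvalues of a symmetric matrix (power iteration + deflation)."""
    rng = random.Random(seed)
    d = len(cov)
    a = [row[:] for row in cov]
    comps, eigvals = [], []
    for _ in range(k):
        v = [rng.random() + 0.1 for _ in range(d)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
        for _ in range(iters):
            w = [sum(a[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0:          # no variance left in the remaining directions
                break
            v = [x / norm for x in w]
        lam = sum(v[i] * sum(a[i][j] * v[j] for j in range(d)) for i in range(d))
        comps.append(v)
        eigvals.append(lam)
        # Deflation removes the found direction so the next pass finds the next one.
        a = [[a[i][j] - lam * v[i] * v[j] for j in range(d)] for i in range(d)]
    return comps, eigvals


def project(sample, means, comps):
    """Coordinates of one sample in the retained principal-component subspace."""
    centered = [x - m for x, m in zip(sample, means)]
    return [sum(c * x for c, x in zip(comp, centered)) for comp in comps]


data = [[-2.0, 0.0], [2.0, 0.0], [0.0, -1.0], [0.0, 1.0]]
means, cov = covariance(data)
comps, eigvals = top_components(cov, k=2)
print([round(e, 4) for e in eigvals])  # [2.6667, 0.6667]
```

Components come out ordered by decreasing eigenvalue (variance), so keeping the first `k` projections discards the directions that contribute least, as described above.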

5 Artificial neural network

Neural networks can be classified as synthetic networks that emulate the biological neural networks found in living organisms. They can be defined as physical cellular networks that are able to acquire, store, and utilize experiential knowledge related to network capabilities and performance. They consist of neurons or nodes interconnected through weights (numerical values representing connection strength). Neurons act as summing and nonlinear mapping junctions. Network computation is performed by this dense mesh of computing neurons and interconnections, which operate collectively and simultaneously on most or all data and inputs [12]. The structure of a typical neuron is illustrated in Fig. 10.
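The neuron of Fig. 10 computes a = f(Wp + b). A sketch with the log-sigmoid transfer function used in this work is shown below; the function names are hypothetical.

```python
import math


def logsig(n):
    """Log-sigmoid transfer function, 1 / (1 + exp(-n))."""
    return 1.0 / (1.0 + math.exp(-n))


def neuron(p, w, b, f=logsig):
    """One neuron as in Fig. 10: summing node followed by a transfer function."""
    n = sum(wi * pi for wi, pi in zip(w, p)) + b   # n = Wp + b
    return f(n)                                     # a = f(n)


print(neuron([1.0, 2.0], [0.5, -0.25], 0.0))  # 0.5, since Wp + b = 0
```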

The problem in this case is one of pattern classification. A neural network is well suited to this case, as no explicit algorithm is available for solving this problem. Moreover, a considerable amount of input–output data is available, which can be used for training as well as testing. The network is trained with input patterns that have associated predefined classes. After training, the network should be able to assign any pattern to a particular category by comparing the features of that object with previously learnt associations. This is a mapping from a feature space to a class space using a nonlinear mapping function [3].

5.1 Network architecture and its properties

A multilayer perceptron neural network (MLP) with back-propagation training is used in this case to develop the pattern association model. The MLP used here is a four-layer feed-forward network. A feed-forward network consists of nodes arranged in layers: the first layer is an input layer, the intermediate layers are hidden layers, and the last layer is an output layer. Here, we have used two hidden layers. After applying PCA, 19 input parameters are left, so there are 19 neurons in the input layer, while three neurons representing the three textures (intergranular, porphyritic, and ophitic) are used in the output layer. The number of neurons in the hidden layers is determined by training the network with different numbers of neurons in both hidden layers and measuring the error for each network. The network that resulted in the minimum mean square error is used for testing. Here, 15 neurons are used in the first hidden layer and 20 neurons in the second hidden layer, because this configuration results in the minimum error. The network used is shown in Fig. 11.

Fig. 11 The network architecture: input layer (19 neurons) → hidden layer I (15 neurons) → hidden layer II (20 neurons) → output layer (3 neurons: intergranular, porphyritic, ophitic)

The sigmoid transfer function logsig is used in the input layer and the first hidden layer, while the linear function purelin is used in the second hidden layer.
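A forward pass through the 19–15–20–3 architecture can be sketched as below. The text's assignment of transfer functions to layers is open to interpretation; this sketch assumes logsig on both hidden layers and purelin on the output layer, which is our reading, and uses hypothetical random weights in place of trained ones. Taking the output neuron with the largest activation as the predicted texture is also an assumption.

```python
import math
import random

SIZES = [19, 15, 20, 3]                     # input, hidden I, hidden II, output
CLASSES = ["intergranular", "porphyritic", "ophitic"]


def logsig(n):
    return 1.0 / (1.0 + math.exp(-n))


def purelin(n):
    return n


# Assumption: logsig on both hidden layers, purelin on the output layer.
TRANSFER = [logsig, logsig, purelin]


def build_network(seed=0):
    """Hypothetical random weights in [-0.5, 0.5]; trained values would replace them."""
    rng = random.Random(seed)
    net = []
    for n_in, n_out in zip(SIZES, SIZES[1:]):
        weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        biases = [rng.uniform(-0.5, 0.5) for _ in range(n_out)]
        net.append((weights, biases))
    return net


def classify(net, p):
    """Forward pass a = f(Wp + b) layer by layer; pick the largest output."""
    a = p
    for (weights, biases), f in zip(net, TRANSFER):
        a = [f(sum(w * x for w, x in zip(row, a)) + b) for row, b in zip(weights, biases)]
    best = max(range(len(a)), key=lambda i: a[i])
    return a, CLASSES[best]


outputs, texture = classify(build_network(), [0.0] * 19)
print(len(outputs), texture)
```

With untrained weights the predicted label is arbitrary; the sketch only shows how the 19 PCA inputs flow through the two hidden layers to three texture outputs.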

5.2 Development of artificial neural network code

Computer code was developed and implemented in Matlab using the Neural Network Toolbox of Matlab. The generalized delta learning rule for MLP neural networks is used. Learning is done in supervised mode, in which the inputs and the corresponding outputs are known beforehand and are used as a training set to make the network learn at an optimum rate. This mode of learning is very pervasive and is used in many situations of natural learning.

The training algorithm used is the error back-propagation training algorithm, which allows experiential acquisition of input–output mapping knowledge within multilayer networks. Here, the input patterns are submitted sequentially during training. Training was performed using trainrp as the training function, as it results in faster training and provides good results. This is a modified form of the basic back-propagation algorithm known as resilient back-propagation [12].
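Resilient back-propagation uses only the sign of each partial derivative and adapts a separate step size for each weight. The sketch below implements one common variant (often called iRprop−); the increase and decrease factors 1.2 and 0.5 are typical values from the Rprop literature, and the initial step and step bounds are assumptions rather than settings reported for `trainrp` in this work. It is demonstrated on a simple quadratic instead of the network error, and the function names are hypothetical.

```python
def sign(x):
    """Return -1, 0 or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def rprop_minimize(grad, w, iters=200, delta0=0.1, inc=1.2, dec=0.5,
                   dmin=1e-12, dmax=50.0):
    """Minimize with resilient back-propagation (iRprop- variant).

    `grad(w)` returns the list of partial derivatives at w. Only their signs
    are used; each weight keeps its own step size `delta[i]`.
    """
    w = list(w)
    delta = [delta0] * len(w)
    g_prev = [0.0] * len(w)
    for _ in range(iters):
        g = list(grad(w))
        for i in range(len(w)):
            if g[i] * g_prev[i] > 0:       # same sign as last time: speed up
                delta[i] = min(delta[i] * inc, dmax)
            elif g[i] * g_prev[i] < 0:     # sign flipped: a minimum was jumped over
                delta[i] = max(delta[i] * dec, dmin)
                g[i] = 0.0                 # skip this weight's update (iRprop-)
            w[i] -= sign(g[i]) * delta[i]
            g_prev[i] = g[i]
    return w


# Toy demonstration on a quadratic bowl with its minimum at (3, -1).
w = rprop_minimize(lambda w: [2 * (w[0] - 3), 2 * (w[1] + 1)], [0.0, 0.0])
print([round(x, 6) for x in w])  # [3.0, -1.0]
```

Because the step size does not depend on the gradient magnitude, small gradients in flat regions of the sigmoid do not stall training, which is one reason this family of methods tends to train faster than plain gradient descent.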

5.3 Division of dataset

While developing the program, the early stopping method is used to improve the generalization of the network. The entire dataset is divided into two subsets: a training set and a validation set. The training set is used for computing the gradient and updating the weights and biases of the network until training is complete. The error on the validation set is monitored during the training process. The validation error and the training set error normally decrease during the initial phase of training. However, when the network begins to over-fit the data, the error on the validation set begins to rise. When the validation error increases for a specified number of iterations, training is stopped, and the weights and biases at the minimum of the validation error are returned.
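The early-stopping rule can be sketched on a sequence of per-epoch validation errors. Here `patience` stands for the specified number of iterations; the paper does not give its value, so the default is only illustrative. In a real training loop the weights and biases would be saved whenever a new minimum is reached and restored at the end. The function name is hypothetical.

```python
def early_stopping(val_errors, patience=6):
    """Return (stop_epoch, best_epoch) for per-epoch validation errors.

    Training stops once the validation error has failed to improve for
    `patience` consecutive epochs; the caller would restore the weights
    saved at `best_epoch`.
    """
    best, best_epoch, fails = float("inf"), 0, 0
    for epoch, err in enumerate(val_errors):
        if err < best:
            best, best_epoch, fails = err, epoch, 0
        else:
            fails += 1
            if fails >= patience:
                return epoch, best_epoch
    return len(val_errors) - 1, best_epoch


errors = [1.0, 0.8, 0.6, 0.5, 0.55, 0.6, 0.7, 0.8]
print(early_stopping(errors, patience=3))  # (6, 3)
```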

5.4 Training and testing

The feed-forward back-propagation neural network discussed above is used for designing the prediction model. The available dataset of 300 thin-section images of basalt is used for training and validation. The dataset is divided into two subsets representing the training set and the validation set. The division is done keeping in mind that each subset should be representative of all possible variations in the data. Training was then performed, and it stopped at a mean square error of 0.0201. Figure 12 shows the graphs obtained during the training process. After training was over, another dataset containing fresh images of 90 samples was used to test the performance of the network.

Fig. 12 a Graph obtained during training (squared error versus epoch for the training and validation sets). b Graph obtained during training

Fig. 13 a–c Correlation graphs for three different textures, intergranular, porphyritic, and ophitic, respectively (network output A versus target T, with the best linear fit and the line A = T). The best linear fits reported in the panels are A = 0.957 T + 0.0435 (R = 0.915), A = (1) T + 2.43e-16 (R = 1), and A = 0.933 T − 1.62e-16 (R = 0.935)

6 Analysis of test results

The test set is composed of images that have been used neither for training nor for validation. This helps in evaluating the final classification performance of the MLP. The test set is divided into three sets, each consisting of 30 images. These three sets are then applied individually to evaluate the test performance. The trained network classified 92.22% of the images correctly and only 7.78% erroneously. Figure 13 shows the correlation graphs obtained from the test set for each of the textures: intergranular (subpanel a), porphyritic (subpanel b), and ophitic (subpanel c). It shows perfect correlation for ophitic texture and a slight variation for the other two textures, due to the misclassification of one of the images. Figure 14 represents graphically the texture classification for one of the test sets. The network classified 29 images correctly and only one image erroneously, assigning an intergranular texture to porphyritic; the percentage error for this test set was therefore 3.33%. Both subpanels a and b in Fig. 12 are graphs representing training progress.
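The reported rates are consistent with 83 correct and 7 misclassified images out of 90, and one error in a 30-image set corresponds to about 3.33%; these counts are inferred from the percentages rather than stated in the text. The helper name `rates` is hypothetical.

```python
def rates(correct, total):
    """Percentages classified correctly and erroneously, rounded to two decimals."""
    return (round(100.0 * correct / total, 2),
            round(100.0 * (total - correct) / total, 2))


print(rates(83, 90))  # (92.22, 7.78)  all three test sets together
print(rates(29, 30))  # (96.67, 3.33)  the test set shown in Fig. 14
```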

Fig. 14 Comparison of predicted and actual textures for a test sample of 30 images (plot title: Comparison of measured and predicted textures; x-axis: sample number; y-axis: texture number, where 1 indicates intergranular, 2 porphyritic, and 3 ophitic; series: actual texture and predicted texture). Here, only one image was classified erroneously


Result of validation set

| Sample no. | Intergranular | Porphyritic | Ophitic |
|---|---|---|---|
| 1 | 1 | 0 | 0 |
| 2 | 0 | 1 | 0 |
| 3 | 1 | 0 | 0 |
| 4 | 1 | 0 | 0 |
| 5 | 0 | 1 | 0 |

The texture of the rock sample is INTERGRANULAR(1)
The texture of the rock sample is PORPHYRITIC(2)
The texture of the rock sample is INTERGRANULAR(1)
The texture of the rock sample is PORPHYRITIC(2)
The texture of the rock sample is PORPHYRITIC(2)

Images with more complexity have a higher chance of being classified erroneously. Because thin-section images of basalt contain complex arrangements of crystals, the probability of error in classification is higher. In this paper, results show that 7.78% of the images are classified erroneously, which is less than the error found in the identification of carbonate rocks by Marmo et al. [3].

7 Conclusion

A numerical and computational approach based on image processing and a multilayer perceptron neural network, which allows the user to classify the texture of basalt rocks from gray-level images digitized from thin sections, is proposed here. This technique predicts the type of texture from digitized images of thin sections with 92.2% accuracy. In one set of 30 tested images, only one result disagreed with the actual texture, which indicates the usefulness of image analysis and artificial neural networks. In the future, larger training and test sets on different basalt rocks could provide more confident results. Moreover, at present there is no standard system for image processing of thin sections, owing to difficulties that may be circumvented by using higher resolution and/or magnification during image acquisition.

The results obtained are encouraging and indicate that a good choice of features and a specific neural network can make automatic textural classification more accurate. In the future, it also appears possible to expand the number of textural classes to include, for example, intersertal and subophitic textures, so that the tested methodology could be applied to all types of rocks.

References

1. Winter, J.D.: An Introduction to Igneous and Metamorphic Petrology. Prentice Hall, Englewood Cliffs (2001)

2. Tagliaferri, R., Longo, G., D'Argenio, B., Incoronato, A.: Neural network: analysis of complex scientific data. Neural Netw. 16(3–4), 295–517 (2003)

3. Marmo, R., Amodio, S., Tagliaferri, R., Ferreri, V., Longo, G.: Textural identification of carbonate rocks by image processing and neural network: methodology proposal and examples. Comput. Geosci. 31, 649–659 (2005)

4. Singh, V., Mohan Rao, S.: Application of image processing and radial basis neural network techniques for ore sorting and ore classification. Miner. Eng. 18, 1412–1420 (2005)

5. Petersen, K., Aldrich, C., Vandeventer, J.S.J.: Analysis of ore particles based on textural pattern recognition. Miner. Eng. 11(10), 959–977 (1998)

6. Henley, I.C.J.: Ore-dressing mineralogy: a review of techniques, applications and recent developments. Spec. Publ. Geo. Soc. S. Afr. 7, 175–200 (1983)

7. Jones, M., Horton, R.: Recent developments in the stereological assessment of composite (middlings) particles by linear measurements. In: Jones, M.J. (ed.) Proc. XIth Commonwealth Mining and Metallurgical Cong., pp. 113–122. IMM, London (1979)

8. Barbery, G., Leroux, D.: Prediction of particle composition distribution after fragmentation of heterogeneous minerals; influence of texture. In: Hagni, R.D. (ed.) Process Mineralogy VI, pp. 415–427. The Metallurgical Society, Warrendale (1986)

9. How to make a thin section? http://almandine.geol.wwu.edu/~dave/other/thinsections/

10. Gonzalez, R.C.: Digital Image Analysis Using MATLAB. Prentice-Hall, Upper Saddle River (2004)

11. Bishop, C.M.: Neural Networks for Pattern Recognition. Clarendon, Oxford (2005)

12. Zurada, J.M.: Introduction to Artificial Neural Systems. PWS, Boston (1992)