
Poularikas, A. D. "Random Digital Signal Processing." The Handbook of Formulas and Tables for Signal Processing. Ed. Alexander D. Poularikas. Boca Raton: CRC Press LLC, 1999.

© 1999 by CRC Press LLC


35 Random Digital Signal Processing

35.1 Discrete-Random Processes

35.2 Signal Modeling

35.3 The Levinson Recursion

35.4 Lattice Filters

References

35.1 Discrete-Random Processes

35.1.1 Definitions

35.1.1.1 Discrete Stochastic Process

$\{x(1), x(2), \ldots, x(n)\}$ = one realization

35.1.1.2 Cumulative Density Function (c.d.f.)

$F_x(x(n)) = P\{X(n) \le x(n)\}$, $X(n)$ = continuous r.v. at time $n$ (over the ensemble), $x(n)$ = the value of $X(n)$ at $n$

35.1.1.3 Probability Density Function (p.d.f.)

$f_x(x(n)) = \dfrac{\partial F_x(x(n))}{\partial x(n)}$

35.1.1.4 Bivariate c.d.f.

$F_x(x(n_1), x(n_2)) = P\{X(n_1) \le x(n_1),\ X(n_2) \le x(n_2)\}$

35.1.1.5 Joint p.d.f.

$f_x(x(n_1), x(n_2)) = \dfrac{\partial^2 F_x(x(n_1), x(n_2))}{\partial x(n_1)\,\partial x(n_2)}$

Note: For multivariate forms extend (35.1.1.4) and (35.1.1.5).

35.1.2 Averages (expectation)

35.1.2.1 Average (mean value)

$\mu(n) = E\{x(n)\} = \int_{-\infty}^{\infty} x(n)\, f(x(n))\, dx(n)$

Relative frequency interpretation: $\mu(n) = \lim_{N\to\infty} \dfrac{1}{N}\sum_{i=1}^{N} x_i(n)$


35.1.2.2 Correlation

$R(m,n) = E\{x(m)x(n)\}$ (real r.v.), $R(m,n) = E\{x(m)x^*(n)\}$ (complex r.v.)

$R(m,n) = \iint x(m)\, x^*(n)\, f(x(m), x(n))\, dx(m)\, dx(n)$

Relative frequency interpretation: $R(m,n) = \lim_{N\to\infty} \dfrac{1}{N}\sum_{i=1}^{N} x_i(m)\, x_i^*(n)$

Autocorrelation: $R(n,n) = E\{x(n)x^*(n)\}$

35.1.2.3 Covariance-Variance

$C(m,n) = E\{[x(m)-\mu(m)][x(n)-\mu(n)]^*\} = E\{x(m)x^*(n)\} - \mu(m)\mu^*(n)$

$C(m,n) = \iint [x(m)-\mu(m)][x(n)-\mu(n)]^*\, f(x(m), x(n))\, dx(m)\, dx(n)$

$C(n,n) = \sigma^2(n) = E\{|x(n)-\mu(n)|^2\}$ = variance

$R(m,n) = C(m,n)$ if $\mu(m) = \mu(n) = 0$

Example 1

Given $x(n) = A e^{j(n\omega_0 + \phi)}$ with the r.v. $\phi$ uniformly distributed between $-\pi$ and $\pi$. Then

$E\{x(n)\} = E\{A\exp(j(n\omega_0+\phi))\} = 0$

$R_x(k,l) = E\{x(k)x^*(l)\} = E\{A e^{j(k\omega_0+\phi)} A^* e^{-j(l\omega_0+\phi)}\} = |A|^2 E\{e^{j(k-l)\omega_0}\} = |A|^2 e^{j(k-l)\omega_0} = R_x(k-l)$

which implies that the process is at least a wide-sense stationary process.

35.1.2.4 Independent r.v.

$f(x(m), x(n)) = f(x(m))\, f(x(n))$ ($f$ = p.d.f.), so that $E\{x(m)x(n)\} = E\{x(m)\}E\{x(n)\}$

$C(m,n) = 0$: uncorrelated. Independent implies uncorrelated, but the reverse is not always true.

35.1.2.5 Orthogonal r.v.

$E\{x(m)x^*(n)\} = 0$

35.1.3 Stationary Processes

35.1.3.1 Strict Stationary

$F(x(n_1), x(n_2), \ldots, x(n_k)) = F(x(n_1+n_0), x(n_2+n_0), \ldots, x(n_k+n_0))$ for any set $\{n_1, n_2, \ldots, n_k\}$, for any $k$ and any $n_0$.

35.1.3.2 Wide-sense Stationary (or weak)

$\mu_x(n) = \mu_x$ = constant for any $n$, $R_x(k,l) = R_x(k-l)$, $C_x(0) < \infty$ (variance)


35.1.3.3 Correlation Properties

1. Symmetry: $r_x(k) = r_x^*(-k)$ ($r_x(k) = r_x(-k)$ for a real process)

2. Mean Square Value: $r_x(0) = E\{|x(n)|^2\} \ge 0$

3. Maximum Value: $r_x(0) \ge |r_x(k)|$

4. Periodicity: If $r_x(k_0) = r_x(0)$ for some $k_0$, then $r_x(k)$ is periodic

35.1.3.4 Autocorrelation Matrix

$\mathbf{R}_x = E\{\mathbf{x}\mathbf{x}^H\} = \begin{bmatrix} r_x(0) & r_x^*(1) & \cdots & r_x^*(p) \\ r_x(1) & r_x(0) & \cdots & r_x^*(p-1) \\ \vdots & \vdots & & \vdots \\ r_x(p) & r_x(p-1) & \cdots & r_x(0) \end{bmatrix}$, $\mathbf{x} = [x(0), x(1), \ldots, x(p)]^T$

The superscript $H$ indicates the conjugate transpose (Hermitian) quantity.

Properties:

1. $\mathbf{R}_x = \mathbf{R}_x^H$ or $r_x(k) = r_x^*(-k)$

2. $\mathbf{R}_x$ = Toeplitz matrix

3. $\mathbf{R}_x$ is non-negative definite, $\mathbf{R}_x \ge 0$

4. $E\{\mathbf{x}^B \mathbf{x}^{BH}\} = \mathbf{R}_x^T$, $\mathbf{x}^B = [x(p), x(p-1), \ldots, x(0)]^T$

35.1.3.5 Autocovariance Matrix

$\mathbf{C}_x = E\{(\mathbf{x}-\boldsymbol{\mu}_x)(\mathbf{x}-\boldsymbol{\mu}_x)^H\} = \mathbf{R}_x - \boldsymbol{\mu}_x\boldsymbol{\mu}_x^H$, $\boldsymbol{\mu}_x = [\mu_x, \mu_x, \ldots, \mu_x]^T$

35.1.3.6 Ergodic in the Mean

a. $\lim_{N\to\infty} \hat{\mu}_x(N) = \mu_x$, $\hat{\mu}_x(N) = \dfrac{1}{N}\sum_{n=1}^{N} x(n)$, $\mu_x = E\{x(n)\}$

b. $\lim_{N\to\infty} \dfrac{1}{N}\sum_{k=0}^{N-1} c_x(k) = 0$

c. $\lim_{k\to\infty} c_x(k) = 0$

35.1.3.7 Autocorrelation Ergodic

$\lim_{N\to\infty} \dfrac{1}{N}\sum_{k=0}^{N-1} c_x^2(k) = 0$

35.1.4 Special Random Signals

35.1.4.1 Independent Identically Distributed

If $f(x(0), x(1), \ldots) = f(x(0))\, f(x(1)) \cdots$ for a zero-mean, stationary random signal, it is said that the elements $x(n)$ are independent identically distributed (iid).

35.1.4.2 White Noise (sequence)

If $r_v(n-m) = E\{v(n)v^*(m)\} = \sigma_v^2\,\delta(n-m)$, where $\delta(n-m)$ = delta function and $\sigma_v^2$ = variance of white noise, the sequence is white noise.
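The Hermitian Toeplitz structure of the autocorrelation matrix in 35.1.3.4 can be sketched in a few lines. This is only an illustrative helper: the function name `autocorr_matrix` and the sample lag values are assumptions, not part of the handbook.

```python
def autocorr_matrix(r):
    """Build R_x from lags r = [r(0), r(1), ..., r(p)].

    Entry (i, j) is r(i - j); negative lags use r(-k) = conj(r(k)),
    which is the symmetry property of 35.1.3.3.
    """
    p = len(r) - 1

    def lag(k):
        return r[k] if k >= 0 else r[-k].conjugate()

    return [[lag(i - j) for j in range(p + 1)] for i in range(p + 1)]


# Hypothetical lag values, chosen only to show the structure.
R = autocorr_matrix([2.0, 1j, 0.5])
hermitian = all(R[i][j] == R[j][i].conjugate()
                for i in range(3) for j in range(3))
```

The first row comes out as $[r_x(0), r_x^*(1), r_x^*(2)]$, matching the layout of the matrix in 35.1.3.4, and `hermitian` confirms property 1.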


35.1.4.3 Correlation Matrix for White Noise

$\mathbf{R} = \sigma_v^2\,\mathbf{I}$

35.1.4.4 First-Order Markov Signal

$f(x(n) \mid x(n-1), x(n-2), \ldots, x(0)) = f(x(n) \mid x(n-1))$

35.1.4.5 Gaussian

1. $f(x(i)) = \dfrac{1}{\sqrt{2\pi\sigma_i^2}} \exp\left[-\dfrac{(x(i)-\mu_i)^2}{2\sigma_i^2}\right]$

2. $f(x(n_0), x(n_1), \ldots, x(n_{L-1})) = f_L(\mathbf{x}) = \dfrac{1}{(2\pi)^{L/2}|\mathbf{C}|^{1/2}} \exp\left[-\tfrac{1}{2}(\mathbf{x}-\boldsymbol{\mu})^T \mathbf{C}^{-1} (\mathbf{x}-\boldsymbol{\mu})\right]$, $\mathbf{x} = [x(n_0), x(n_1), \ldots, x(n_{L-1})]^T$, $\boldsymbol{\mu} = [\mu(n_0), \mu(n_1), \ldots, \mu(n_{L-1})]^T$, $\mathbf{C}$ = covariance matrix for the elements of $\mathbf{x}$

3. $f_L(\mathbf{x}) = \dfrac{1}{(2\pi)^{L/2}|\mathbf{R}|^{1/2}} \exp\left[-\tfrac{1}{2}\mathbf{x}^T \mathbf{R}^{-1} \mathbf{x}\right]$, $\mathbf{R}$ = correlation matrix, $E\{\mathbf{x}\} = \mathbf{0}$

4. If $x(n)$ is zero-mean Gaussian iid (Gaussian white noise), then $\mathbf{R} = \sigma_v^2\mathbf{I}$, $|\mathbf{R}| = \sigma_v^{2L}$, and $f_L(\mathbf{x}) = \dfrac{1}{(2\pi\sigma_v^2)^{L/2}} \exp\left[-\dfrac{1}{2\sigma_v^2}\sum_{i=0}^{L-1} x^2(n_i)\right] = \prod_{i=0}^{L-1} f(x(n_i))$

5. Linear operation on a Gaussian signal produces a Gaussian signal.

35.1.5 Complex Random Signals

35.1.5.1 Complex Random Signal

$x = u + jv$, $u$ and $v$ real r.v., $x$ = complex r.v.

35.1.5.2 Expectation

$E\{x\} = E\{u\} + jE\{v\}$

35.1.5.3 Second Moment

$E\{|x|^2\} = E\{xx^*\} = E\{u^2\} + E\{v^2\}$

35.1.5.4 Variance

$\operatorname{var}(x) = E\{|x - E\{x\}|^2\} = E\{|x|^2\} - |E\{x\}|^2$

35.1.5.5 Expectation of Two r.v.

$E\{x_1^* x_2\} = E\{u_1u_2\} + E\{v_1v_2\} + j\,(E\{u_1v_2\} - E\{u_2v_1\})$; the real vector $[u_1\ u_2\ v_1\ v_2]^T$ has a joint p.d.f.

35.1.5.6 Covariance of Two r.v.

$\operatorname{cov}(x_1, x_2) = E\{x_1^* x_2\} - E^*\{x_1\}E\{x_2\}$

Note: If $x_1$ is independent of $x_2$ (equivalently, $[u_1\ v_1]^T$ is independent of $[u_2\ v_2]^T$), then $\operatorname{cov}(x_1, x_2) = 0$.

35.1.5.7 Expectation of Vectors

If $\mathbf{x} = [x_1\ x_2\ \cdots\ x_n]^T$, then $E\{\mathbf{x}\} = [E\{x_1\}\ E\{x_2\}\ \cdots\ E\{x_n\}]^T$


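35.1.5.8 Covariance of $\mathbf{x}$

$\mathbf{C}_x = E\{(\mathbf{x}-E\{\mathbf{x}\})(\mathbf{x}-E\{\mathbf{x}\})^H\} = \begin{bmatrix} \operatorname{var}(x_1) & \operatorname{cov}(x_1,x_2) & \cdots & \operatorname{cov}(x_1,x_n) \\ \operatorname{cov}(x_2,x_1) & \operatorname{var}(x_2) & \cdots & \operatorname{cov}(x_2,x_n) \\ \vdots & \vdots & & \vdots \\ \operatorname{cov}(x_n,x_1) & \operatorname{cov}(x_n,x_2) & \cdots & \operatorname{var}(x_n) \end{bmatrix}^{*}$

$H$ denotes the conjugate transpose of a matrix.

Note: $\mathbf{C}_x^H = \mathbf{C}_x$ and $\mathbf{C}_x$ is positive semi-definite.

Example

$\mathbf{y} = \mathbf{A}\mathbf{x} + \mathbf{b}$, $E\{\mathbf{y}\} = \mathbf{A}E\{\mathbf{x}\} + \mathbf{b}$, $y_i = \sum_{j=1}^{n} [\mathbf{A}]_{ij} x_j + b_i$, $E\{y_i\} = \sum_{j=1}^{n} [\mathbf{A}]_{ij} E\{x_j\} + b_i$

$\mathbf{C}_y = E\{(\mathbf{y}-E\{\mathbf{y}\})(\mathbf{y}-E\{\mathbf{y}\})^H\} = E\{\mathbf{A}(\mathbf{x}-E\{\mathbf{x}\})(\mathbf{x}-E\{\mathbf{x}\})^H\mathbf{A}^H\}$

$[\mathbf{C}_y]_{ij} = \sum_{k=1}^{n}\sum_{l=1}^{n} [\mathbf{A}]_{ik}\, E\{[(\mathbf{x}-E\{\mathbf{x}\})(\mathbf{x}-E\{\mathbf{x}\})^H]_{kl}\}\, [\mathbf{A}^H]_{lj}$, i.e., $\mathbf{C}_y = \mathbf{A}\mathbf{C}_x\mathbf{A}^H$

35.1.5.9 Complex Gaussian

$x = u + jv$, $u$ and $v$ independent, $u \sim N(\mu_u, \sigma^2/2)$, $v \sim N(\mu_v, \sigma^2/2)$,

$f(u,v) = \dfrac{1}{\pi\sigma^2} \exp\left[-\dfrac{1}{\sigma^2}\left((u-\mu_u)^2 + (v-\mu_v)^2\right)\right]$

If we write $\mu = E\{x\} = \mu_u + j\mu_v$, then $f(x) = \dfrac{1}{\pi\sigma^2}\exp\left[-\dfrac{|x-\mu|^2}{\sigma^2}\right]$, $x \sim CN(\mu, \sigma^2)$ = complex Gaussian

35.1.5.10 Complex Gaussian Vector

$\mathbf{x} = [x_1\ x_2\ \cdots\ x_n]^T$; each component of $\mathbf{x}$ is $x_i \sim CN(\mu_i, \sigma_i^2)$, and the real random vectors $[u_1\ v_1]^T, [u_2\ v_2]^T, \ldots, [u_n\ v_n]^T$ are independent,

$f(\mathbf{x}) = \prod_{i=1}^{n} f(x_i) = \dfrac{1}{\pi^n \prod_{i=1}^{n}\sigma_i^2} \exp\left[-\sum_{i=1}^{n}\dfrac{|x_i-\mu_i|^2}{\sigma_i^2}\right] = \dfrac{1}{\pi^n \det(\mathbf{C}_x)} \exp\left[-(\mathbf{x}-\boldsymbol{\mu})^H \mathbf{C}_x^{-1} (\mathbf{x}-\boldsymbol{\mu})\right]$, $\mathbf{C}_x = \operatorname{diag}(\sigma_1^2, \sigma_2^2, \ldots, \sigma_n^2)$

This is the multivariate complex Gaussian p.d.f., written $CN(\boldsymbol{\mu}, \mathbf{C}_x)$.

Properties

1. Any subvector of $\mathbf{x}$ is complex Gaussian

2. If the $x_i$'s of $\mathbf{x}$ are uncorrelated, they are also independent and vice versa

3. Linear transformations again produce complex Gaussian

4. If $\mathbf{x} = [x_1\ x_2\ x_3\ x_4]^T \sim CN(\mathbf{0}, \mathbf{C}_x)$, then $E\{x_1^* x_2 x_3^* x_4\} = E\{x_1^* x_2\}E\{x_3^* x_4\} + E\{x_1^* x_4\}E\{x_2 x_3^*\}$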


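35.1.6 Complex Wide Sense Stationary Random Processes

35.1.6.1 Mean

$E\{x(n)\} = E\{u(n) + jv(n)\} = E\{u(n)\} + jE\{v(n)\}$

35.1.6.2 Autocorrelation

$r_{xx}(k) = E\{x^*(n)x(n+k)\} = E\{(u(n) - jv(n))(u(n+k) + jv(n+k))\} = r_{uu}(k) + jr_{uv}(k) - jr_{vu}(k) + r_{vv}(k) = 2r_{uu}(k) + 2jr_{uv}(k)$

35.1.7 Derivatives, Gradients, and Optimization

35.1.7.1 Complex Derivative

$\dfrac{\partial J}{\partial\theta} = \dfrac{1}{2}\left(\dfrac{\partial J}{\partial\alpha} - j\dfrac{\partial J}{\partial\beta}\right)$, $\theta = \alpha + j\beta$, $J$ = scalar function with respect to a complex parameter $\theta$.

Note: $\dfrac{\partial J}{\partial\theta} = 0$ if and only if $\dfrac{\partial J}{\partial\alpha} = \dfrac{\partial J}{\partial\beta} = 0$

Example

$J = |\theta|^2 = \alpha^2 + \beta^2$, $\dfrac{\partial J}{\partial\theta} = \dfrac{1}{2}(2\alpha - j2\beta) = \theta^*$

35.1.7.2 Chain Rule

$\dfrac{\partial\theta}{\partial\theta} = \dfrac{1}{2}\left(\dfrac{\partial}{\partial\alpha} - j\dfrac{\partial}{\partial\beta}\right)(\alpha + j\beta) = \dfrac{1}{2}(1 + 0 + 0 + 1) = 1$, $\dfrac{\partial\theta^*}{\partial\theta} = \dfrac{1}{2}\left(\dfrac{\partial}{\partial\alpha} - j\dfrac{\partial}{\partial\beta}\right)(\alpha - j\beta) = 0$

and hence, for $J(\theta, \theta^*) = \theta\theta^*$, $\dfrac{\partial J}{\partial\theta} = \theta^*\cdot 1 + \theta\cdot 0 = \theta^*$

35.1.7.3 Complex Conjugate Derivative

$\dfrac{\partial J}{\partial\theta^*} = \dfrac{1}{2}\left(\dfrac{\partial J}{\partial\alpha} + j\dfrac{\partial J}{\partial\beta}\right) = \left(\dfrac{\partial J}{\partial\theta}\right)^*$ (for real $J$)

Note: Setting $\partial J/\partial\theta^* = 0$ will produce the same solutions as $\partial J/\partial\theta = 0$.

35.1.7.4 Complex Vector Parameter

$\dfrac{\partial J}{\partial\boldsymbol{\theta}} = \left[\dfrac{\partial J}{\partial\theta_1}\ \dfrac{\partial J}{\partial\theta_2}\ \cdots\ \dfrac{\partial J}{\partial\theta_p}\right]^T$,

each element is given by (35.1.7.1).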


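Note: $\partial J/\partial\boldsymbol{\theta} = \mathbf{0}$ if each element is zero, and hence if and only if $\partial J/\partial\alpha_i = \partial J/\partial\beta_i = 0$ for all $i = 1, 2, \ldots, p$.

35.1.7.5 Hermitian Forms

1. $l = \mathbf{b}^H\boldsymbol{\theta} = \sum_{i=1}^{p} b_i^*\theta_i$, $\dfrac{\partial l}{\partial\theta_k} = b_k^*\dfrac{\partial\theta_k}{\partial\theta_k} = b_k^*$ (see 35.1.7.2) and hence $\dfrac{\partial\,\mathbf{b}^H\boldsymbol{\theta}}{\partial\boldsymbol{\theta}} = \mathbf{b}^*$

2. Also, $\dfrac{\partial\,\boldsymbol{\theta}^H\mathbf{b}}{\partial\boldsymbol{\theta}} = \mathbf{0}$

3. $J = \boldsymbol{\theta}^H\mathbf{A}\boldsymbol{\theta}$ = real ($\mathbf{A}^H = \mathbf{A}$), $J = \sum_{i=1}^{p}\sum_{j=1}^{p}\theta_i^*[\mathbf{A}]_{ij}\theta_j$, $\dfrac{\partial J}{\partial\theta_k} = \sum_{i=1}^{p}\theta_i^*[\mathbf{A}]_{ik} = \sum_{i=1}^{p}[\mathbf{A}^T]_{ki}\theta_i^*$, $\dfrac{\partial J}{\partial\boldsymbol{\theta}} = \mathbf{A}^T\boldsymbol{\theta}^* = (\mathbf{A}\boldsymbol{\theta})^*$

35.1.8 Power Spectrum Wide-Sense Stationary Processes (WSS)

35.1.8.1 Power Spectrum (power spectral density)

$S_x(e^{j\omega}) = \sum_{k=-\infty}^{\infty} r_x(k)e^{-jk\omega}$, $r_x(k) = E\{x(n)x^*(n-k)\}$, $x(n)$ = WSS, $r_x(k) = \dfrac{1}{2\pi}\int_{-\pi}^{\pi} S_x(e^{j\omega})e^{jk\omega}\,d\omega$

Properties

1. $S_x(e^{j\omega})$ = real-valued function

2. $S_x(e^{j\omega}) \ge 0$, non-negative

3. $r_x(0) = E\{|x(n)|^2\} = \dfrac{1}{2\pi}\int_{-\pi}^{\pi} S_x(e^{j\omega})\,d\omega$

Example

$r_x(k) = a^{|k|}$, $|a| < 1$,

$S_x(e^{j\omega}) = \sum_{k=-\infty}^{\infty} r_x(k)e^{-jk\omega} = \sum_{k=0}^{\infty} a^k e^{-jk\omega} + \sum_{k=0}^{\infty} a^k e^{jk\omega} - 1 = \dfrac{1}{1-ae^{-j\omega}} + \dfrac{1}{1-ae^{j\omega}} - 1 = \dfrac{1-a^2}{1-2a\cos\omega+a^2}$

35.1.9 Filtering Wide-Sense Stationary (WSS) Processes and Spectral Factorization

35.1.9.1 Output Autocorrelation

$r_y(k) = r_x(k) * h(k) * h^*(-k)$, $r_y(k)$ = output autocorrelation of a linear time-invariant system, $h(k)$ = impulse response of the system, $r_x(k)$ = autocorrelation of the WSS input process $x(n)$.

35.1.9.2 Output Power

$S_y(e^{j\omega}) = S_x(e^{j\omega})\,|H(e^{j\omega})|^2$, $S_y(z) = S_x(z)H(z)H^*(1/z^*)$ (Z-transforms);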


if $h(n)$ is real, then $S_y(z) = S_x(z)H(z)H(1/z)$.

Note: If $H(z)$ has a zero at $z = z_0$, then $S_y(z)$ will have a zero at $z = z_0$ and another at $z = 1/z_0^*$.

35.1.9.3 Spectral Factorization

$S_x(z) = \sigma_v^2\,Q(z)\,Q^*(1/z^*)$, $S_x(z)$ = Z-transform of the autocorrelation of a WSS process $x(n)$,

$\sigma_v^2 = \exp\left\{\dfrac{1}{2\pi}\int_{-\pi}^{\pi}\ln S_x(e^{j\omega})\,d\omega\right\}$ = variance of white noise.

35.1.9.4 Rational Function

$S_x(z) = \sigma_v^2\,\dfrac{B(z)}{A(z)}\,\dfrac{B^*(1/z^*)}{A^*(1/z^*)}$,

$B(z) = 1 + b(1)z^{-1} + \cdots + b(q)z^{-q}$, $A(z) = 1 + a(1)z^{-1} + \cdots + a(p)z^{-p}$.

Note: $S_x(z) = S_x^*(1/z^*)$ since $S_x(e^{j\omega})$ is real. This implies that for each pole (or zero) in $S_x(z)$ there will be a matching pole (or zero) at the conjugate reciprocal location.

35.1.9.5 Wold Decomposition

Any random process can be written in the form $x(n) = x_p(n) + x_r(n)$, where $x_r(n)$ = regular random process, $x_p(n)$ = predictable process, and $x_p(n)$ and $x_r(n)$ are orthogonal: $E\{x_r(m)x_p^*(n)\} = 0$.

Note: $S_x(e^{j\omega}) = S_{x_r}(e^{j\omega}) + \sum_{k=1}^{N} a_k\,\delta(\omega-\omega_k)$ = continuous spectrum + line spectrum

35.1.10 Special Types of Random Processes (x(n) WSS process)

35.1.10.1 Autoregressive Moving Average (ARMA)

$S_x(z) = \sigma_v^2\,\dfrac{B_q(z)B_q^*(1/z^*)}{A_p(z)A_p^*(1/z^*)}$,

$\sigma_v^2$ = variance of input white noise, $H(z) = \dfrac{B_q(z)}{A_p(z)}$ = causal linear time-invariant filter, $S_x(z)$ = output power spectrum

35.1.10.2 Power Spectrum of ARMA Process

$S_x(e^{j\omega}) = \sigma_v^2\,\dfrac{B_q(z)B_q^*(1/z^*)}{A_p(z)A_p^*(1/z^*)}\bigg|_{z=e^{j\omega}} = \sigma_v^2\,\dfrac{|B_q(e^{j\omega})|^2}{|A_p(e^{j\omega})|^2}$

35.1.10.3 Yule-Walker Equations for ARMA Process

$r_x(k) + \sum_{l=1}^{p} a_p(l)\,r_x(k-l) = \begin{cases}\sigma_v^2\,c_q(k), & 0 \le k \le q \\ 0, & k > q\end{cases}$

$c_q(k) = \sum_{l=k}^{q} b_q(l)\,h^*(l-k) = \sum_{l=0}^{q-k} b_q(l+k)\,h^*(l)$

matrix form:


$\begin{bmatrix} r_x(0) & r_x(-1) & \cdots & r_x(-p) \\ r_x(1) & r_x(0) & \cdots & r_x(-p+1) \\ \vdots & \vdots & & \vdots \\ r_x(q) & r_x(q-1) & \cdots & r_x(q-p) \\ \hline r_x(q+1) & r_x(q) & \cdots & r_x(q-p+1) \\ \vdots & \vdots & & \vdots \\ r_x(q+p) & r_x(q+p-1) & \cdots & r_x(q) \end{bmatrix} \begin{bmatrix} 1 \\ a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = \sigma_v^2 \begin{bmatrix} c_q(0) \\ c_q(1) \\ \vdots \\ c_q(q) \\ \hline 0 \\ \vdots \\ 0 \end{bmatrix}$

35.1.10.4 Extrapolation of Correlation

$r_x(k) = -\sum_{l=1}^{p} a_p(l)\,r_x(k-l)$ for $k \ge p$, given $r_x(0), \ldots, r_x(p-1)$

35.1.10.5 Autoregressive Process (AR)

$S_x(z) = \sigma_v^2\,\dfrac{|b(0)|^2}{A_p(z)A_p^*(1/z^*)}$ = output of the filter $H(z) = \dfrac{b(0)}{1 + \sum_{k=1}^{p} a_p(k)z^{-k}} = \dfrac{b(0)}{A_p(z)}$

35.1.10.6 Power Spectrum of AR Process

$S_x(e^{j\omega}) = \sigma_v^2\,\dfrac{|b(0)|^2}{|A_p(e^{j\omega})|^2}$

35.1.10.7 Yule-Walker Equation for AR Process

Set $q = 0$ in (35.1.10.3); with $c_0(0) = b(0)h^*(0) = |b(0)|^2$ the equations are:

$r_x(k) + \sum_{l=1}^{p} a_p(l)\,r_x(k-l) = \sigma_v^2\,|b(0)|^2\,\delta(k)$, $k \ge 0$.

In matrix form:

$\begin{bmatrix} r_x(0) & r_x(-1) & \cdots & r_x(-p) \\ r_x(1) & r_x(0) & \cdots & r_x(-p+1) \\ \vdots & \vdots & & \vdots \\ r_x(p) & r_x(p-1) & \cdots & r_x(0) \end{bmatrix} \begin{bmatrix} 1 \\ a_p(1) \\ \vdots \\ a_p(p) \end{bmatrix} = \sigma_v^2\,|b(0)|^2 \begin{bmatrix} 1 \\ 0 \\ \vdots \\ 0 \end{bmatrix}$

which is linear in the coefficients $a_p(k)$.

35.1.10.8 Moving Average Process (MA)

$S_x(z) = \sigma_v^2\,B_q(z)B_q^*(1/z^*)$ = output of the filter $H(z) = \sum_{k=0}^{q} b_q(k)z^{-k}$


35.1.10.9 Power Spectrum of MA Process

35.1.10.10 Yule-Walker Equation of MA Process

From (35.1.10.3) with and noting that then

MA(q) process is zero for koutside

35.2 Signal Modeling

35.2.1 The Pade Approximation

35.2.1.1 Pade Approximation

and This means

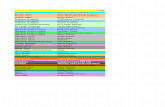

that the data fit exactly to the model over the range SeeFigure (35.1) for the model. The

system function is (see Section 35.1.10)

35.2.1.2 Matrix Form of Pade Approximation

Note: Solve for ap(p)s first (the lower part of the matrix) and then solve for bq(q)s.

35.2.1.3 Denominator Coefficients (ap(p))

From the lower part of 35.2.1.2 we find

FIGURE 35.1 x(n) modeled as unit sample response of linear shift-invariant system with p poles and q zeros.

S e B ex

j

v q

j( ) ( ) = 22

a kp

( ) = 0 h n b nq

( ) ( ),=

c k b k b r k b k b k b k bq

q k

q q x v q q v

q k

q q( ) ( ) ( ), ( ) ( ) ( ) ( ) ( ),= + = = +

=

=

l l

l l l l

0

2 2

0

[ , ].q q

x n a k x n kb n n q

n q q pk

p

p

q( ) ( ) ( )

( ) , , ,

, ,+ =

== + +

=

1

0 1

0 1

L

L

h n x n n p q h n n( ) ( ) , , , , ( )= = + = 0 0for and

[ , ].0 p q+H z B z A z

q p( ) ( ) / ( ).=

h(n) e(n)+

+-(n)

x(n)

Ap(z)

Bq(z)

H(z) =

xx x

x x

x q x q x q p

x q x q x q p

x q p x q p x q

( )( ) ( )

( ) ( )

( ) ( ) ( )

( ) ( ) ( )

( ) ( ) ( )

0 0 01 0 0

2 1 0

1

1 1

1

L

L

L

M

L

L

M

L

+ +

+ +

=

1

1

2

0

1

0

0

a

a

a p

b

b

b qp

p

p

q

q

q

( )

( )

( )

( )

( )

( )

M

M

M


$\begin{bmatrix} x(q) & x(q-1) & \cdots & x(q-p+1) \\ x(q+1) & x(q) & \cdots & x(q-p+2) \\ \vdots & \vdots & & \vdots \\ x(q+p-1) & x(q+p-2) & \cdots & x(q) \end{bmatrix} \begin{bmatrix} a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = -\begin{bmatrix} x(q+1) \\ x(q+2) \\ \vdots \\ x(q+p) \end{bmatrix}$

or equivalently $\mathbf{X}_q\mathbf{a}_p = -\mathbf{x}_{q+1}$, where $\mathbf{X}_q$ = nonsymmetric Toeplitz matrix with the $x(q)$ element in the upper left corner and $\mathbf{x}_{q+1}$ = vector with its first element being $x(q+1)$.

Note:

1. If $\mathbf{X}_q$ is nonsingular, then $\mathbf{X}_q^{-1}$ exists and there is a unique solution for the $a_p(k)$'s: $\mathbf{a}_p = -\mathbf{X}_q^{-1}\mathbf{x}_{q+1}$.

2. If $\mathbf{X}_q$ is singular and (35.2.1.3) has a solution $\mathbf{a}_p$, then $\mathbf{X}_q\mathbf{z} = \mathbf{0}$ has a nonzero solution and, hence, there is also a solution of the form $\tilde{\mathbf{a}}_p = \mathbf{a}_p + \mathbf{z}$.

3. If $\mathbf{X}_q$ is singular and no solution exists, we must set $a_p(0) = 0$ and solve the equation $\mathbf{X}_q\mathbf{a}_p = \mathbf{0}$.

35.2.1.4 All Pole Model

$H(z) = b(0)\Big/\left(1 + \sum_{k=1}^{p} a_p(k)z^{-k}\right)$; with $q = 0$, (35.2.1.3) becomes

$\begin{bmatrix} x(0) & 0 & \cdots & 0 \\ x(1) & x(0) & \cdots & 0 \\ \vdots & \vdots & & \vdots \\ x(p-1) & x(p-2) & \cdots & x(0) \end{bmatrix} \begin{bmatrix} a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = -\begin{bmatrix} x(1) \\ x(2) \\ \vdots \\ x(p) \end{bmatrix}$

or $\mathbf{X}_0\mathbf{a}_p = -\mathbf{x}_1$, or $a_p(k) = -\dfrac{1}{x(0)}\left[x(k) + \sum_{l=1}^{k-1} a_p(l)\,x(k-l)\right]$

Example 1

For $\mathbf{x} = [1\ \ 1.5\ \ 0.75\ \ 0.21\ \ 0.18\ \ 0.05]^T$, find the Pade approximation with an all-pole, second-order model ($p = 2$, $q = 0$). From (35.2.1.4), $a(1) + 0\cdot a(2) = -1.5$ and $1.5\,a(1) + a(2) = -0.75$, or $a(1) = -1.5$ and $a(2) = 1.5$. Also $b(0) = x(0) = 1$ and $H(z) = 1/[1 - 1.5z^{-1} + 1.5z^{-2}]$, and since $h(n) = \hat{x}(n)$ we obtain the approximation $\hat{\mathbf{x}} = [1,\ 1.5,\ 0.75,\ -1.125,\ -2.8125,\ -2.5312]^T$.

Note: The model matches the data through the second sample ($n = 0, 1, 2$) only.

35.2.2 Prony's Method

35.2.2.1 Prony's Signal Modeling

Figure 35.2 shows the system representation.

FIGURE 35.2 System interpretation of Prony's method for signal modeling.
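Returning to Example 1 of 35.2.1.4, the all-pole Pade recursion $a_p(k) = -\frac{1}{x(0)}[x(k) + \sum_{l=1}^{k-1} a_p(l)x(k-l)]$ is short enough to sketch directly. The function names below are illustrative assumptions, and the sketch handles real data only.

```python
def pade_all_pole(x, p):
    """All-pole Pade fit (q = 0) of real data x.

    Returns (b0, a) with a = [1, a(1), ..., a(p)], using the forward
    substitution of 35.2.1.4 (X_0 is lower triangular).
    """
    a = [1.0]
    for k in range(1, p + 1):
        acc = x[k] + sum(a[l] * x[k - l] for l in range(1, k))
        a.append(-acc / x[0])
    return x[0], a


def unit_sample_response(b0, a, n_samples):
    """h(n) of H(z) = b0 / (1 + a(1) z^-1 + ... + a(p) z^-p)."""
    h = []
    for n in range(n_samples):
        acc = b0 if n == 0 else 0.0
        acc -= sum(a[k] * h[n - k] for k in range(1, len(a)) if n - k >= 0)
        h.append(acc)
    return h


x = [1.0, 1.5, 0.75, 0.21, 0.18, 0.05]
b0, a = pade_all_pole(x, 2)
h = unit_sample_response(b0, a, len(x))
```

Here `h[:3]` reproduces `x[:3]`, while the later samples drift away from the data, which is the behavior the note in the example describes.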


35.2.2.2 Normal Equations

$\sum_{l=1}^{p} a_p(l)\,r_x(k,l) = -r_x(k,0)$, $k = 1, 2, \ldots, p$; $r_x(k,l) = \sum_{n=q+1}^{\infty} x(n-l)\,x^*(n-k)$, $k, l \ge 0$.

Note: The infinity indicates a nonfinite data sequence.

35.2.2.3 Numerator

$b_q(n) = x(n) + \sum_{k=1}^{p} a_p(k)\,x(n-k)$, $n = 0, 1, \ldots, q$

35.2.2.4 Minimum Square Error

$\epsilon_{p,q} = r_x(0,0) + \sum_{k=1}^{p} a_p(k)\,r_x(0,k)$

Example 1

Let $x(n) = 1$ for $n = 0, 1, \ldots, N-1$ and $x(n) = 0$ otherwise. Use Prony's method to model $x(n)$ as the unit sample response of a linear time-invariant filter with one pole and one zero, $H(z) = (b(0) + b(1)z^{-1})/(1 + a(1)z^{-1})$.

Solution

From (35.2.2.2) with $p = 1$: $a(1)\,r_x(1,1) = -r_x(1,0)$, $r_x(1,1) = \sum_{n=2}^{\infty} x^2(n-1) = N-1$, and $r_x(1,0) = \sum_{n=2}^{\infty} x(n)\,x(n-1) = N-2$.

Hence $a(1) = -r_x(1,0)/r_x(1,1) = -(N-2)/(N-1)$ and the denominator becomes $A(z) = 1 - \dfrac{N-2}{N-1}z^{-1}$.

Numerator coefficients: $b(0) = x(0) = 1$, $b(1) = x(1) + a(1)\,x(0) = 1 - \dfrac{N-2}{N-1} = \dfrac{1}{N-1}$.

The minimum square error is $\epsilon_{1,1} = r_x(0,0) + a(1)\,r_x(0,1)$. But $r_x(0,0) = \sum_{n=2}^{\infty} x^2(n) = N-2$ and $r_x(0,1) = N-2$, so $\epsilon_{1,1} = (N-2) - \dfrac{(N-2)^2}{N-1} = \dfrac{N-2}{N-1}$.

For comparison, with $N = 21$: $H(z) = (1 + 0.05z^{-1})/(1 - 0.95z^{-1})$, $h(n) = \delta(n) + (0.95)^{n-1}u(n-1)$, and $\epsilon_{1,1} = 0.95$. The actual modeling error $e(n) = x(n) - h(n)$ gives $\sum_{n=0}^{\infty}[e(n)]^2 \approx 4.595$.

35.2.3 Shanks' Method

35.2.3.1 Shanks' Signal Modeling

Figure 35.3 shows the system representation.
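The Prony example of 35.2.2 (unit pulse of length $N$, one pole and one zero) can be checked numerically. The sketch below hard-codes $p = q = 1$ and real data; the function names and the truncation length `n_terms` are assumptions made for illustration.

```python
def prony_one_pole_one_zero(x):
    """Prony's method with p = q = 1 for a real sequence x (zero outside its support)."""
    def xv(n):
        return x[n] if 0 <= n < len(x) else 0.0

    def r(k, l):
        # r_x(k, l) = sum over n >= q + 1 of x(n - l) x(n - k), with q = 1
        return sum(xv(n - l) * xv(n - k) for n in range(2, len(x) + 2))

    a1 = -r(1, 0) / r(1, 1)          # normal equation (35.2.2.2)
    b0 = xv(0)                       # numerator (35.2.2.3)
    b1 = xv(1) + a1 * xv(0)
    eps = r(0, 0) + a1 * r(0, 1)     # minimum square error (35.2.2.4)
    return a1, b0, b1, eps


def model_error_energy(x, a1, b0, b1, n_terms=2000):
    """Sum of [x(n) - h(n)]^2, truncated after n_terms samples."""
    total, h_prev = 0.0, 0.0
    for n in range(n_terms):
        h = (b0 if n == 0 else 0.0) + (b1 if n == 1 else 0.0) - a1 * h_prev
        xn = x[n] if n < len(x) else 0.0
        total += (xn - h) ** 2
        h_prev = h
    return total


pulse = [1.0] * 21                   # N = 21
a1, b0, b1, eps = prony_one_pole_one_zero(pulse)
true_error = model_error_energy(pulse, a1, b0, b1)
```

The gap between `eps` (the error Prony's method minimizes) and `true_error` (the error between $x(n)$ and the model's unit sample response) illustrates that the two error measures are not the same quantity.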


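FIGURE 35.3 Shanks' method. The denominator $A_p(z)$ is found using Prony's method; $B_q(z)$ is found by minimizing the sum of the squares of the error $e(n)$.

35.2.3.2 Numerator of H(z)

$\sum_{l=0}^{q} b_q(l)\,r_g(k-l) = r_{xg}(k)$, $k = 0, 1, \ldots, q$; $r_g(k-l) = \sum_{n=0}^{\infty} g(n-l)\,g^*(n-k)$,

$r_{xg}(k) = \sum_{n=0}^{\infty} x(n)\,g^*(n-k)$, $g(n) = \delta(n) - \sum_{k=1}^{p} a_p(k)\,g(n-k)$

35.2.4 All-Pole Model: Prony's Method

35.2.4.1 Transfer Function

$H(z) = b(0)\Big/\left(1 + \sum_{k=1}^{p} a_p(k)z^{-k}\right)$

35.2.4.2 Normal Equations

$\sum_{l=1}^{p} a_p(l)\,r_x(k-l) = -r_x(k)$, $k = 1, 2, \ldots, p$; $r_x(k) = \sum_{n=0}^{\infty} x(n)\,x^*(n-k)$, $x(n) = 0$ for $n < 0$

35.2.4.3 Numerator

$b(0) = \sqrt{\epsilon_p}$, $\epsilon_p$ = minimum error (see 35.2.4.4)

35.2.4.4 Minimum Error

$\epsilon_p = r_x(0) + \sum_{k=1}^{p} a_p(k)\,r_x^*(k)$

35.2.5 Finite Data Record Prony All-Pole Model

35.2.5.1 Normal Equations

$\sum_{l=1}^{p} a_p(l)\,r_x(k-l) = -r_x(k)$, $k = 1, 2, \ldots, p$; $r_x(k) = \sum_{n=0}^{N} x(n)\,x^*(n-k)$, $k \ge 0$, $x(n) = 0$ for $n < 0$ and $n > N$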


35.2.5.2 Minimum Error

$\epsilon_p = r_x(0) + \sum_{k=1}^{p} a_p(k)\,r_x^*(k)$

35.2.6 Finite Data Record: Covariance Method of All-Pole Model

35.2.6.1 Normal Equation

$\sum_{l=1}^{p} a_p(l)\,r_x(k,l) = -r_x(k,0)$, $k = 1, 2, \ldots, p$,

$r_x(k,l) = \sum_{n=p}^{N} x(n-l)\,x^*(n-k)$, $k, l \ge 0$, $x(n) = 0$ for $n < 0$ and $n > N$.

35.2.6.2 Minimum Error

$[\epsilon_p]_{\min} = r_x(0,0) + \sum_{k=1}^{p} a_p(k)\,r_x(0,k)$

Example

$\mathbf{x} = [1\ \ \beta\ \ \beta^2\ \cdots\ \beta^N]^T$; use the first-order model $H(z) = b(0)/(1 + a(1)z^{-1})$, $p = 1$. Then 35.2.6.1 becomes $\sum_{l=1}^{1} a(l)\,r_x(k,l) = -r_x(k,0)$.

For $k = 1$, 35.2.6.1 becomes $a(1)\,r_x(1,1) = -r_x(1,0)$, and we must find $r_x(1,1)$ and $r_x(1,0)$.

From 35.2.6.1, $r_x(1,1) = \sum_{n=1}^{N} x(n-1)\,x(n-1) = \sum_{n=0}^{N-1} x^2(n) = \dfrac{1-\beta^{2N}}{1-\beta^2}$; also $r_x(1,0) = \sum_{n=1}^{N} x(n)\,x(n-1) = \beta\,\dfrac{1-\beta^{2N}}{1-\beta^2}$, so $a(1) = -r_x(1,0)/r_x(1,1) = -\beta$.

But $x(0) = \hat{x}(0)$ gives $b(0) = 1$, so that the model is $H(z) = 1/[1 - \beta z^{-1}]$.

35.3 The Levinson Recursion

35.3.1 The Levinson-Durbin Recursion

35.3.1.1 All-Pole Modeling

$r_x(k) + \sum_{l=1}^{p} a_p(l)\,r_x(k-l) = 0$, $k = 1, 2, \ldots, p$; $\epsilon_p = r_x(0) + \sum_{l=1}^{p} a_p(l)\,r_x^*(l)$,

$r_x(k) = \sum_{n=0}^{N} x(n)\,x^*(n-k)$, $k \ge 0$, $x(n) = 0$ for $n < 0$ and $n > N$


35.3.1.2 All-Pole Matrix Format

$\begin{bmatrix} r_x(0) & r_x^*(1) & r_x^*(2) & \cdots & r_x^*(p) \\ r_x(1) & r_x(0) & r_x^*(1) & \cdots & r_x^*(p-1) \\ r_x(2) & r_x(1) & r_x(0) & \cdots & r_x^*(p-2) \\ \vdots & \vdots & \vdots & & \vdots \\ r_x(p) & r_x(p-1) & r_x(p-2) & \cdots & r_x(0) \end{bmatrix} \begin{bmatrix} 1 \\ a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = \epsilon_p \begin{bmatrix} 1 \\ 0 \\ 0 \\ \vdots \\ 0 \end{bmatrix}$

or $\mathbf{R}_p\mathbf{a}_p = \epsilon_p\mathbf{u}_1$, $\mathbf{u}_1 = [1, 0, \ldots, 0]^T$, $\mathbf{R}_p$ = symmetric (Hermitian) Toeplitz.

35.3.1.3 Solution of (35.3.1.2)

1. Initialization of the recursion

a) $a_0(0) = 1$

b) $\epsilon_0 = r_x(0)$

2. For $j = 0, 1, \ldots, p-1$

a) $\gamma_j = r_x(j+1) + \sum_{i=1}^{j} a_j(i)\,r_x(j-i+1)$;

b) $\Gamma_{j+1} = -\gamma_j/\epsilon_j$ = reflection coefficient;

c) For $i = 1, 2, \ldots, j$: $a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$;

d) $a_{j+1}(j+1) = \Gamma_{j+1}$;

e) $\epsilon_{j+1} = \epsilon_j\,[1 - |\Gamma_{j+1}|^2]$

3. $b(0) = \sqrt{\epsilon_p}$ (see 35.3.1.1)

35.3.1.4 Properties

1. The $\Gamma_j$'s produced by solving the autocorrelation normal equations (see Section 35.2.4) obey the relation $|\Gamma_j| \le 1$

2. If $|\Gamma_j| < 1$ for all $j$, then $A_p(z) = 1 + \sum_{k=1}^{p} a_p(k)z^{-k}$ = minimum phase polynomial (all roots lie inside the unit circle)

3. If $\mathbf{a}_p$ is the solution to the Toeplitz normal equation $\mathbf{R}_p\mathbf{a}_p = \epsilon_p\mathbf{u}_1$ (see 35.3.1.2) and $\mathbf{R}_p > 0$ (positive definite), then $A_p(z)$ = minimum phase

4. If we choose $b(0) = \sqrt{\epsilon_p}$ (energy matching constraint), then the autocorrelation sequences of $x(n)$ and $h(n)$ are equal for $|k| \le p$.
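The recursion of 35.3.1.3 translates almost line for line into code. The following is a minimal sketch, not a production routine: the name `levinson_durbin` and the return layout are assumptions, and the lag values in the usage lines are only an illustration.

```python
def levinson_durbin(r):
    """Levinson-Durbin recursion for lags r = [r_x(0), ..., r_x(p)].

    Returns (a, gammas, eps) with a = [1, a_p(1), ..., a_p(p)],
    gammas = [Gamma_1, ..., Gamma_p], and eps = epsilon_p.
    """
    a = [1.0]                       # step 1a: a_0(0) = 1
    eps = r[0]                      # step 1b: eps_0 = r_x(0)
    gammas = []
    for j in range(len(r) - 1):
        # step 2a: gamma_j = r_x(j+1) + sum_i a_j(i) r_x(j-i+1)
        g = r[j + 1] + sum(a[i] * r[j - i + 1] for i in range(1, j + 1))
        gamma = -g / eps            # step 2b: reflection coefficient
        # steps 2c and 2d: order update of the coefficient vector
        a = ([1.0]
             + [a[i] + gamma * a[j - i + 1].conjugate() for i in range(1, j + 1)]
             + [gamma])
        eps = eps * (1 - abs(gamma) ** 2)   # step 2e
        gammas.append(gamma)
    return a, gammas, eps


a, gammas, eps = levinson_durbin([8.0, 4.0, 2.0])
b0 = eps ** 0.5                     # step 3: energy matching constraint
```

For these lags the first reflection coefficient is $-1/2$ and the second is zero, so the second-order model collapses to a first-order one with $\epsilon_2 = \epsilon_1 = 6$.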


35.3.2 Step-Up and Step-Down Recursions

35.3.2.1 Step-Up Recursion

The recursion finds the $a_p(i)$'s from the $\Gamma_j$'s.

Steps

1. Initialize the recursion: $a_0(0) = 1$

2. For $j = 0, 1, \ldots, p-1$

a) For $i = 1, 2, \ldots, j$: $a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$

b) $a_{j+1}(j+1) = \Gamma_{j+1}$

3. $b(0) = \sqrt{\epsilon_p}$

35.3.2.2 Step-Down Recursion

The recursion finds the $\Gamma_j$'s from the $a_p(i)$'s.

Steps

1. Set $\Gamma_p = a_p(p)$

2. For $j = p-1, p-2, \ldots, 1$

a) For $i = 1, 2, \ldots, j$: $a_j(i) = \dfrac{1}{1-|\Gamma_{j+1}|^2}\left[a_{j+1}(i) - \Gamma_{j+1}\,a_{j+1}^*(j-i+1)\right]$

b) Set $\Gamma_j = a_j(j)$

c) If $|\Gamma_j| = 1$, quit

3. $\epsilon_p = b^2(0)$

Example

To implement the third-order filter $H(z) = 1 + 0.5z^{-1} - 0.1z^{-2} - 0.5z^{-3}$ in the form of a lattice structure we proceed as follows. $\mathbf{a}_3 = [1, 0.5, -0.1, -0.5]^T$, $\Gamma_3 = a_3(3) = -0.5$. From step 2) we obtain the second-order polynomial $\mathbf{a}_2 = [1\ \ a_2(1)\ \ a_2(2)]^T$:

$\begin{bmatrix} a_2(1) \\ a_2(2) \end{bmatrix} = \dfrac{1}{1-\Gamma_3^2}\left\{\begin{bmatrix} a_3(1) \\ a_3(2) \end{bmatrix} - \Gamma_3\begin{bmatrix} a_3(2) \\ a_3(1) \end{bmatrix}\right\} = \dfrac{1}{1-0.25}\left\{\begin{bmatrix} 0.5 \\ -0.1 \end{bmatrix} + 0.5\begin{bmatrix} -0.1 \\ 0.5 \end{bmatrix}\right\} = \begin{bmatrix} 0.6 \\ 0.2 \end{bmatrix}$,

or $\Gamma_2 = a_2(2) = 0.2$. Next we find $a_1(1) = \dfrac{1}{1-\Gamma_2^2}\left[a_2(1) - \Gamma_2\,a_2(1)\right] = 0.5$ and hence $\Gamma_1 = a_1(1) = 0.5$ and $\boldsymbol{\Gamma} = [0.5\ \ 0.2\ \ -0.5]^T$. The lattice filter implementation is shown in Figure 35.4.

FIGURE 35.4 Lattice implementation with input $x(n)$, output $y(n)$, and reflection coefficients $\Gamma_1 = 0.5$, $\Gamma_2 = 0.2$, $\Gamma_3 = -0.5$.
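The step-up and step-down recursions of 35.3.2 are inverses of each other, which the third-order example above makes easy to check. The sketch below uses hypothetical function names and raises an error where the text says to quit.

```python
def step_up(gammas):
    """Reflection coefficients [Gamma_1..Gamma_p] -> a = [1, a_p(1), ..., a_p(p)]."""
    a = [1.0]
    for j, g in enumerate(gammas):
        # a_{j+1}(i) = a_j(i) + Gamma_{j+1} a_j*(j-i+1); a_{j+1}(j+1) = Gamma_{j+1}
        a = ([1.0]
             + [a[i] + g * a[j - i + 1].conjugate() for i in range(1, j + 1)]
             + [g])
    return a


def step_down(a):
    """Coefficients a = [1, a_p(1), ..., a_p(p)] -> [Gamma_1, ..., Gamma_p]."""
    a = list(a)
    p = len(a) - 1
    gammas = [0.0] * p
    for order in range(p, 0, -1):
        g = a[order]                      # Gamma_order = a_order(order)
        gammas[order - 1] = g
        if order == 1:
            break
        denom = 1 - abs(g) ** 2
        if denom == 0:
            raise ValueError("|Gamma| = 1: the recursion cannot continue")
        a = [1.0] + [(a[i] - g * a[order - i].conjugate()) / denom
                     for i in range(1, order)]
    return gammas


gammas = step_down([1.0, 0.5, -0.1, -0.5])
a_back = step_up(gammas)
```

Running `step_down` on the example polynomial returns the reflection coefficients found by hand, and `step_up` recovers the original coefficient vector.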


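35.3.3 Cholesky Decomposition

35.3.3.1 Cholesky Decomposition

$\mathbf{R}_p = \mathbf{L}_p\mathbf{D}_p\mathbf{L}_p^H$, $\mathbf{R}_p$ = Hermitian Toeplitz autocorrelation matrix,

$\mathbf{A}_p = \begin{bmatrix} 1 & a_1^*(1) & a_2^*(2) & \cdots & a_p^*(p) \\ 0 & 1 & a_2^*(1) & \cdots & a_p^*(p-1) \\ 0 & 0 & 1 & \cdots & a_p^*(p-2) \\ \vdots & \vdots & \vdots & & \vdots \\ 0 & 0 & 0 & \cdots & 1 \end{bmatrix}$, $\mathbf{L}_p = (\mathbf{A}_p^H)^{-1}$ = lower triangular,

$\mathbf{D}_p = \operatorname{diag}\{\epsilon_0, \epsilon_1, \ldots, \epsilon_p\}$, $\det\mathbf{R}_p = \det\mathbf{D}_p$, $\mathbf{A}_p^H\mathbf{R}_p\mathbf{A}_p = \mathbf{D}_p$ ($\mathbf{R}_p$: see 35.3.1.2)

35.3.4 Inversion of Toeplitz Matrix

35.3.4.1 Inversion of Toeplitz Matrix

$\mathbf{R}_p\mathbf{x} = \mathbf{b}$, $\mathbf{R}_p^{-1} = \mathbf{A}_p\mathbf{D}_p^{-1}\mathbf{A}_p^H$, $\mathbf{R}_p$ = nonsingular Hermitian matrix (see 35.3.1.2), $\mathbf{A}_p$ = see 35.3.3.1, $\mathbf{D}_p$ = see 35.3.3.1, $\mathbf{b}$ = arbitrary vector.

35.3.5 Levinson Recursion for Inverting Toeplitz Matrix

35.3.5.1 Levinson Recursion

$\mathbf{R}_p\mathbf{x} = \mathbf{b}$, $\mathbf{R}_p$ = see 35.3.1.2 (known), $\mathbf{b}$ = arbitrary vector, $\mathbf{x}$ = unknown vector

Recursion:

1. Initialize the recursion

a) $a_0(0) = 1$

b) $x_0(0) = b(0)/r_x(0)$

c) $\epsilon_0 = r_x(0)$

2. For $j = 0, 1, \ldots, p-1$

a) $\gamma_j = r_x(j+1) + \sum_{i=1}^{j} a_j(i)\,r_x(j-i+1)$

b) $\Gamma_{j+1} = -\gamma_j/\epsilon_j$

c) For $i = 1, 2, \ldots, j$: $a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$;

d) $a_{j+1}(j+1) = \Gamma_{j+1}$

e) $\epsilon_{j+1} = \epsilon_j\,[1 - |\Gamma_{j+1}|^2]$

f) $\delta_j = \sum_{i=0}^{j} x_j(i)\,r_x(j-i+1)$

g) $q_{j+1} = [b(j+1) - \delta_j]/\epsilon_{j+1}$

h) For $i = 0, 1, \ldots, j$: $x_{j+1}(i) = x_j(i) + q_{j+1}\,a_{j+1}^*(j-i+1)$

i) $x_{j+1}(j+1) = q_{j+1}$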
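Steps 1 and 2 above can be sketched as a small solver for $\mathbf{R}_p\mathbf{x} = \mathbf{b}$. The function name `levinson_solve` is an assumption, the routine is a direct transcription of the listed steps, and it has only been exercised here on a real symmetric Toeplitz system.

```python
def levinson_solve(r, b):
    """Solve R x = b for Toeplitz R built from lags r = [r_x(0), ..., r_x(p)]."""
    a = [1.0]                        # 1a
    x = [b[0] / r[0]]                # 1b
    eps = r[0]                       # 1c
    for j in range(len(r) - 1):
        g = r[j + 1] + sum(a[i] * r[j - i + 1] for i in range(1, j + 1))   # 2a
        gamma = -g / eps                                                    # 2b
        a = ([1.0]
             + [a[i] + gamma * a[j - i + 1].conjugate() for i in range(1, j + 1)]
             + [gamma])                                                     # 2c, 2d
        eps = eps * (1 - abs(gamma) ** 2)                                   # 2e
        delta = sum(x[i] * r[j - i + 1] for i in range(j + 1))              # 2f
        q = (b[j + 1] - delta) / eps                                        # 2g
        # 2h and 2i: a already holds the order-(j+1) coefficients here
        x = [x[i] + q * a[j - i + 1].conjugate() for i in range(j + 1)] + [q]
    return x


r = [8.0, 4.0, 2.0]
b = [18.0, 12.0, 24.0]
x = levinson_solve(r, b)

# Residual check against the full Toeplitz matrix.
R = [[r[abs(i - k)] for k in range(3)] for i in range(3)]
residual = [sum(R[i][k] * x[k] for k in range(3)) - b[i] for i in range(3)]
```

The cost is of order $p^2$ operations, compared with order $p^3$ for general Gaussian elimination, which is the reason for exploiting the Toeplitz structure.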


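Example

$\begin{bmatrix} 8 & 4 & 2 \\ 4 & 8 & 4 \\ 2 & 4 & 8 \end{bmatrix}\begin{bmatrix} x(0) \\ x(1) \\ x(2) \end{bmatrix} = \begin{bmatrix} 18 \\ 12 \\ 24 \end{bmatrix}$

1. Initialization: $\epsilon_0 = r(0) = 8$, $x_0(0) = b(0)/r(0) = 18/8 = 9/4$

2. For $j = 0$: $\gamma_0 = r(1) = 4$, $\Gamma_1 = -\gamma_0/\epsilon_0 = -4/8 = -1/2$, $\mathbf{a}_1 = \begin{bmatrix} 1 \\ \Gamma_1 \end{bmatrix} = \begin{bmatrix} 1 \\ -1/2 \end{bmatrix}$, $\epsilon_1 = \epsilon_0[1 - \Gamma_1^2] = 8[1 - 1/4] = 6$, $\delta_0 = x_0(0)\,r(1) = (9/4)\cdot 4 = 9$, $q_1 = [b(1) - \delta_0]/\epsilon_1 = (12 - 9)/6 = 1/2$,

and hence $\mathbf{x}_1 = \begin{bmatrix} x_0(0) \\ 0 \end{bmatrix} + q_1\begin{bmatrix} a_1(1) \\ 1 \end{bmatrix} = \begin{bmatrix} 9/4 \\ 0 \end{bmatrix} + \dfrac{1}{2}\begin{bmatrix} -1/2 \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ 1/2 \end{bmatrix}$

3. For $j = 1$: $\gamma_1 = r(2) + a_1(1)\,r(1) = 2 + (-1/2)(4) = 0$, $\Gamma_2 = 0$, $\mathbf{a}_2 = [1\ \ -1/2\ \ 0]^T$, $\epsilon_2 = \epsilon_1 = 6$, $\delta_1 = [x_1(0)\ \ x_1(1)]\begin{bmatrix} r(2) \\ r(1) \end{bmatrix} = [2\ \ 1/2][2\ \ 4]^T = 4 + 2 = 6$, $q_2 = [b(2) - \delta_1]/\epsilon_2 = [24 - 6]/6 = 3$,

$\mathbf{x}_2 = \begin{bmatrix} x_1(0) \\ x_1(1) \\ 0 \end{bmatrix} + q_2\begin{bmatrix} a_2(2) \\ a_2(1) \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ 1/2 \\ 0 \end{bmatrix} + 3\begin{bmatrix} 0 \\ -1/2 \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ -1 \\ 3 \end{bmatrix}$

35.4 Lattice Filters

35.4.1 The FIR Lattice Filter

35.4.1.1 Forward Prediction Error

$e_p^f(n) = x(n) + \sum_{k=1}^{p} a_p(k)\,x(n-k)$, $e_p^f$ = forward prediction error, $x(n)$ = data, $a_p(k)$ = all-pole filter coefficients.

35.4.1.2 Square of Error

$\epsilon_p^+ = \sum_{n=0}^{\infty} |e_p^f(n)|^2$

35.4.1.3 Z-Transform of the pth-Order Error

$E_p^f(z) = A_p(z)X(z)$, $A_p(z) = 1 + \sum_{k=1}^{p} a_p(k)z^{-k}$ = forward prediction error filter (built from the all-pole model coefficients), see (35.4.1.1).

Note: The output of the forward prediction error filter is $e_p^f(n)$ when the input is $x(n)$.

35.4.1.4 (j+1) Order Coefficient

$a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$, see Section 35.3.1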


35.4.1.5 (j+1) Order Coefficient in the Z-domain

$A_{j+1}(z) = A_j(z) + \Gamma_{j+1}\,z^{-(j+1)}A_j^*(1/z^*)$

35.4.1.6 (j+1) Order Error in the Z-domain

$E_{j+1}^f(z) = E_j^f(z) + z^{-1}\Gamma_{j+1}E_j^b(z)$, $E_j^b(z) = z^{-j}X(z)A_j^*(1/z^*)$, $E_{j+1}^f(z) = A_{j+1}(z)X(z)$, $E_j^f(z) = A_j(z)X(z)$

35.4.1.7 (j+1) Order of Error (see 35.4.1.6)

$e_{j+1}^f(n) = e_j^f(n) + \Gamma_{j+1}\,e_j^b(n-1)$ (inverse Z-transform of (35.4.1.6)), $e_j^b$ = $j$th-order backward prediction error

35.4.1.8 Backward Prediction Error

$e_j^b(n) = x(n-j) + \sum_{k=1}^{j} a_j^*(k)\,x(n-j+k)$

35.4.1.9 (j+1) Backward Prediction Error

$e_{j+1}^b(n) = e_j^b(n-1) + \Gamma_{j+1}^*\,e_j^f(n)$

35.4.1.10 Single Stage of FIR Lattice Filter

See Figure 35.5

35.4.1.11 pth-Order FIR Lattice Filter

See Figure 35.6

Note: $e_0^f(n) = e_0^b(n) = x(n)$

FIGURE 35.5 One-stage FIR lattice filter.

FIGURE 35.6 pth-order FIR lattice filter.
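The order updates 35.4.1.7 and 35.4.1.9 define the FIR lattice completely, so a sample-by-sample sketch is straightforward. The function name `fir_lattice` and its state layout are assumptions for illustration; the reflection coefficients in the usage lines are those of the step-down example in 35.3.2.2.

```python
def fir_lattice(x, gammas):
    """Run x(n) through a p-stage FIR lattice and return e_p^f(n)."""
    p = len(gammas)
    b_delayed = [0.0] * p            # b_delayed[j] holds e_j^b(n-1)
    out = []
    for sample in x:
        f = sample                   # e_0^f(n) = x(n)
        b = sample                   # e_0^b(n) = x(n)
        for j, g in enumerate(gammas):
            f_next = f + g * b_delayed[j]               # 35.4.1.7
            b_next = b_delayed[j] + g.conjugate() * f   # 35.4.1.9
            b_delayed[j] = b         # keep e_j^b(n) for the next time step
            f, b = f_next, b_next
        out.append(f)
    return out


impulse = [1.0, 0.0, 0.0, 0.0]
h = fir_lattice(impulse, [0.5, 0.2, -0.5])
```

Driving the lattice with a unit sample returns the coefficients of $A_3(z) = 1 + 0.5z^{-1} - 0.1z^{-2} - 0.5z^{-3}$, so the lattice and the direct-form filter have the same unit sample response.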


35.4.1.12 All-Pass Filter

$H_{ap}(z) = \dfrac{A_p^R(z)}{A_p(z)} = \dfrac{z^{-p}A_p^*(1/z^*)}{1 + \sum_{k=1}^{p} a_p(k)z^{-k}}$, $E_p^b(z) = H_{ap}(z)\,E_p^f(z)$,

which indicates that $e_p^b(n)$ is the output of an all-pass filter with input $e_p^f(n)$.

35.4.2 IIR Lattice Filters

35.4.2.1 All-pole Filter

$\dfrac{1}{A_p(z)} = \dfrac{E_0^f(z)}{E_p^f(z)} = \dfrac{1}{1 + \sum_{k=1}^{p} a_p(k)z^{-k}}$ produces a response $e_0^f(n)$ to the input $e_p^f(n)$ (see Section 35.4.1 for definition).

Forward and Backward Errors

$e_j^f(n) = e_{j+1}^f(n) - \Gamma_{j+1}\,e_j^b(n-1)$ (see 35.4.1.7), $e_{j+1}^b(n) = e_j^b(n-1) + \Gamma_{j+1}^*\,e_j^f(n)$ (see 35.4.1.9). See Figure 35.7 for a pictorial representation of the single stage of an all-pole lattice filter. Cascading $p$ such sections, we obtain the $p$th-order all-pole lattice filter.

FIGURE 35.7 Single stage of an all-pole lattice filter.

35.4.2.2 All-Pass Filter

$H_{ap}(z) = \dfrac{z^{-p}A_p^*(1/z^*)}{A_p(z)}$

35.4.3 Lattice All-Pole Modeling of Signals

35.4.3.1 Forward Covariance Method

35.4.3.1.1 Reflection Coefficients

$\Gamma_j^f = -\dfrac{\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*}{\sum_{n=j}^{N} |e_{j-1}^b(n-1)|^2} = -\dfrac{\langle\mathbf{e}_{j-1}^f, \mathbf{e}_{j-1}^b\rangle}{\|\mathbf{e}_{j-1}^b\|^2}$,

$\langle\cdot,\cdot\rangle$ = dot product, $\mathbf{e}_{j-1}^f = [e_{j-1}^f(j)\ \ e_{j-1}^f(j+1)\ \cdots\ e_{j-1}^f(N)]^T$, $\mathbf{e}_{j-1}^b = [e_{j-1}^b(j-1)\ \ e_{j-1}^b(j)\ \cdots\ e_{j-1}^b(N-1)]^T$


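35.4.3.1.2 Forward Covariance Algorithm

1. Given the $j-1$ reflection coefficients $\boldsymbol{\Gamma}_{j-1}^f = [\Gamma_1^f\ \ \Gamma_2^f\ \cdots\ \Gamma_{j-1}^f]^T$

2. Given the forward and backward prediction errors $e_{j-1}^f(n)$, $e_{j-1}^b(n)$

3. The $j$th reflection coefficient is found from 35.4.3.1.1

4. Using the lattice filter, the $(j-1)$st-order forward and backward prediction errors are updated to form $e_j^f(n)$ and $e_j^b(n)$

5. Repeat the process

Example

Given $x(n) = \beta^n$ for $n = 0, 1, \ldots, N$.

Initialization: $e_0^f(n) = e_0^b(n) = x(n) = \beta^n$. Next, evaluation of the norm:

$\|\mathbf{e}_0^b\|^2 = \sum_{n=1}^{N}[e_0^b(n-1)]^2 = \sum_{n=0}^{N-1}\beta^{2n} = \dfrac{1-\beta^{2N}}{1-\beta^2}$.

Inner product between $\mathbf{e}_0^f$ and $\mathbf{e}_0^b$:

$\langle\mathbf{e}_0^f, \mathbf{e}_0^b\rangle = \sum_{n=1}^{N} e_0^f(n)\,e_0^b(n-1) = \sum_{n=1}^{N}\beta^n\beta^{n-1} = \beta\,\dfrac{1-\beta^{2N}}{1-\beta^2}$.

From 35.4.3.1.1:

$\Gamma_1^f = -\dfrac{\langle\mathbf{e}_0^f, \mathbf{e}_0^b\rangle}{\|\mathbf{e}_0^b\|^2} = -\beta$.

Updating the forward prediction error (set $j = j-1$ in 35.4.1.7): $e_1^f(n) = e_0^f(n) + \Gamma_1^f e_0^b(n-1) = \beta^n u(n) - \beta\,\beta^{n-1}u(n-1)$; hence, $e_1^f(n) = \delta(n)$, with $u(n)$ = unit step function. First-order modeling error (35.4.1.2): $\epsilon_1^f = \sum_{n=1}^{N}[e_1^f(n)]^2 = 0$, since all $e_1^f(n)$ for $n \ge 1$ are zero. First-order backward prediction error (set $j = j-1$ in 35.4.1.9): $e_1^b(n) = e_0^b(n-1) + \Gamma_1^f e_0^f(n) = \beta^{n-1}u(n-1) - \beta^{n+1}u(n)$, or $e_1^b(n) = \beta^{n-1}(1-\beta^2)\,u(n-1) - \beta\,\delta(n)$. Second reflection coefficient:

$\Gamma_2^f = -\dfrac{\langle\mathbf{e}_1^f, \mathbf{e}_1^b\rangle}{\|\mathbf{e}_1^b\|^2} = 0$, since $e_1^f(n) = 0$ for $n \ge 1$. Similar steps give $e_2^f(n) = e_1^f(n) = \delta(n)$ and $\Gamma_3^f = 0$. Continuing, $\Gamma_j^f = 0$ for all $j > 1$, and hence $\boldsymbol{\Gamma}^f = [-\beta\ \ 0\ \cdots\ 0]^T$. For finding the $a_i$'s see Section 35.4.1.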
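The forward covariance algorithm of 35.4.3.1.2 can be sketched for real data as follows. The function name is an assumption, samples before $n = 0$ are taken as zero when the errors are updated, and $\beta = 0.5$ is chosen only because its powers are exact in binary floating point.

```python
def forward_covariance(x, p):
    """Reflection coefficients Gamma_1^f..Gamma_p^f for real data x(0..N)."""
    N = len(x) - 1
    ef = list(x)                     # e_0^f(n) = x(n)
    eb = list(x)                     # e_0^b(n) = x(n)
    gammas = []
    for j in range(1, p + 1):
        # 35.4.3.1.1: sums run over n = j, ..., N
        num = sum(ef[n] * eb[n - 1] for n in range(j, N + 1))
        den = sum(eb[n - 1] ** 2 for n in range(j, N + 1))
        g = -num / den
        gammas.append(g)
        # lattice update (35.4.1.7 and 35.4.1.9), with e^b(-1) taken as 0
        new_ef, new_eb = [], []
        for n in range(N + 1):
            b_prev = eb[n - 1] if n >= 1 else 0.0
            new_ef.append(ef[n] + g * b_prev)
            new_eb.append(b_prev + g * ef[n])
        ef, eb = new_ef, new_eb
    return gammas


beta = 0.5
data = [beta ** n for n in range(9)]      # x(n) = beta^n, n = 0..8
gammas = forward_covariance(data, 3)
```

The result agrees with the worked example: the first reflection coefficient is $-\beta$ and the remaining ones vanish.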


35.4.3.2 The Backward Covariance Method

35.4.3.2.1 Reflection Coefficients

$\Gamma_j^b = -\dfrac{\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*}{\sum_{n=j}^{N} |e_{j-1}^f(n)|^2} = -\dfrac{\langle\mathbf{e}_{j-1}^f, \mathbf{e}_{j-1}^b\rangle}{\|\mathbf{e}_{j-1}^f\|^2}$

The steps are similar to those in Section 35.4.3.1: given the first $j-1$ reflection coefficients $\boldsymbol{\Gamma}_{j-1}^b = [\Gamma_1^b\ \ \Gamma_2^b\ \cdots\ \Gamma_{j-1}^b]^T$ and given the forward and backward prediction errors $e_{j-1}^f(n)$ and $e_{j-1}^b(n)$, the $j$th reflection coefficient is computed. Next, using the lattice filter, the $(j-1)$st-order forward and backward errors are updated to form the $j$th-order errors, and the process is repeated.

35.4.3.3 Burg's Method

35.4.3.3.1 Reflection Coefficients

$\Gamma_j^B = -\dfrac{2\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*}{\sum_{n=j}^{N}\left[|e_{j-1}^f(n)|^2 + |e_{j-1}^b(n-1)|^2\right]} = -\dfrac{2\langle\mathbf{e}_{j-1}^f, \mathbf{e}_{j-1}^b\rangle}{\|\mathbf{e}_{j-1}^f\|^2 + \|\mathbf{e}_{j-1}^b\|^2}$,

$\langle\cdot,\cdot\rangle$ = dot product; the $\mathbf{e}$'s are the forward and backward prediction errors.

35.4.3.3.2 Burg Error

$\epsilon_j^B = \left[\epsilon_{j-1}^B - |e_{j-1}^f(j-1)|^2 - |e_{j-1}^b(N)|^2\right]\left[1 - |\Gamma_j^B|^2\right]$, $\epsilon_0^B = \sum_{n=0}^{N}\left[|e_0^f(n)|^2 + |e_0^b(n)|^2\right] = 2\sum_{n=0}^{N}|x(n)|^2$.

Burg's method has the same steps for computing the necessary unknowns as in Sections 35.4.3.1 and 35.4.3.2.

35.4.3.3.3 Burg's Algorithm

1. Initialize the recursion:

a) $e_0^f(n) = e_0^b(n) = x(n)$

b) $D_1 = \sum_{n=1}^{N}\left[|x(n)|^2 + |x(n-1)|^2\right]$

2. For $j = 1$ to $p$:

a) $\Gamma_j^B = -\dfrac{2}{D_j}\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*$

For $n = j$ to $N$:

b) $e_j^f(n) = e_{j-1}^f(n) + \Gamma_j^B\,e_{j-1}^b(n-1)$, $e_j^b(n) = e_{j-1}^b(n-1) + (\Gamma_j^B)^*\,e_{j-1}^f(n)$

c) $D_{j+1} = D_j\left(1 - |\Gamma_j^B|^2\right) - |e_j^f(j)|^2 - |e_j^b(N)|^2$,

d) $\epsilon_j^B = D_j\left[1 - |\Gamma_j^B|^2\right]$
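Burg's algorithm as listed above can be sketched as follows, with $D_1$ taken as the denominator of 35.4.3.3.1 for $j = 1$. The function name `burg` and the test signal are assumptions made for illustration.

```python
def burg(x, p):
    """Burg reflection coefficients and errors for data x(0..N), model order p."""
    N = len(x) - 1
    ef = list(x)                                   # 1a: e_0^f(n) = x(n)
    eb = list(x)                                   #     e_0^b(n) = x(n)
    D = sum(abs(x[n]) ** 2 + abs(x[n - 1]) ** 2    # 1b: D_1
            for n in range(1, N + 1))
    gammas, errors = [], []
    for j in range(1, p + 1):
        num = sum(ef[n] * eb[n - 1].conjugate() for n in range(j, N + 1))
        g = -2 * num / D                           # 2a
        new_ef, new_eb = ef[:], eb[:]
        for n in range(j, N + 1):                  # 2b: lattice update
            new_ef[n] = ef[n] + g * eb[n - 1]
            new_eb[n] = eb[n - 1] + g.conjugate() * ef[n]
        ef, eb = new_ef, new_eb
        scaled = D * (1 - abs(g) ** 2)
        errors.append(scaled)                      # 2d: eps_j^B
        D = scaled - abs(ef[j]) ** 2 - abs(eb[N]) ** 2   # 2c: D_{j+1}
        gammas.append(g)
    return gammas, errors


beta = 0.5
signal = [beta ** n for n in range(11)]            # x(n) = beta^n, N = 10
gammas, errors = burg(signal, 2)
```

For this signal the first two reflection coefficients come out as $-2\beta/(1+\beta^2)$ and $2\beta^2/(1+\beta^4)$, both smaller than one in magnitude, as Burg's method guarantees.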


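Example 1

Given $x(n) = \beta^n u(n)$, $n = 0, 1, \ldots, N$, $u(n)$ = unit step function. From the example of (35.4.3.1.2),

$\langle\mathbf{e}_0^f, \mathbf{e}_0^b\rangle = \beta\,\dfrac{1-\beta^{2N}}{1-\beta^2}$ and $\|\mathbf{e}_0^b\|^2 = \dfrac{1-\beta^{2N}}{1-\beta^2}$.

Similarly, from Section 35.4.3.2,

$\|\mathbf{e}_0^f\|^2 = \beta^2\,\dfrac{1-\beta^{2N}}{1-\beta^2}$.

Therefore,

$\Gamma_1^B = -\dfrac{2\langle\mathbf{e}_0^f, \mathbf{e}_0^b\rangle}{\|\mathbf{e}_0^f\|^2 + \|\mathbf{e}_0^b\|^2} = -\dfrac{2\beta}{1+\beta^2}$.

Update the errors:

$e_1^f(n) = e_0^f(n) + \Gamma_1^B e_0^b(n-1) = \beta^n u(n) - \dfrac{2\beta}{1+\beta^2}\,\beta^{n-1}u(n-1)$,

$e_1^b(n) = e_0^b(n-1) + \Gamma_1^B e_0^f(n) = \beta^{n-1}u(n-1) - \dfrac{2\beta}{1+\beta^2}\,\beta^n u(n)$.

Hence,

$\|\mathbf{e}_1^f\|^2 = \sum_{n=2}^{N}[e_1^f(n)]^2 = \dfrac{\beta^4(1-\beta^2)(1-\beta^{2(N-1)})}{(1+\beta^2)^2}$, $\|\mathbf{e}_1^b\|^2 = \sum_{n=2}^{N}[e_1^b(n-1)]^2 = \dfrac{(1-\beta^2)(1-\beta^{2(N-1)})}{(1+\beta^2)^2}$,

$\langle\mathbf{e}_1^f, \mathbf{e}_1^b\rangle = \sum_{n=2}^{N} e_1^f(n)\,e_1^b(n-1) = -\dfrac{\beta^2(1-\beta^2)(1-\beta^{2(N-1)})}{(1+\beta^2)^2}$,

$\Gamma_2^B = -\dfrac{2\langle\mathbf{e}_1^f, \mathbf{e}_1^b\rangle}{\|\mathbf{e}_1^f\|^2 + \|\mathbf{e}_1^b\|^2} = \dfrac{2\beta^2}{1+\beta^4}$.

Zero-order error:

$\epsilon_0^B = 2\sum_{n=0}^{N} x^2(n) = 2\,\dfrac{1-\beta^{2(N+1)}}{1-\beta^2}$,

first-order error:

$\epsilon_1^B = \left[\epsilon_0^B - [e_0^f(0)]^2 - [e_0^b(N)]^2\right]\left[1 - (\Gamma_1^B)^2\right] = \dfrac{(1-\beta^{2N})(1-\beta^2)}{1+\beta^2}$.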


35.4.3.4 Modified Covariance Method

35.4.3.4.1 Normal Equation

$\sum_{l=1}^{p}\left[r_x(k,l) + r_x(p-l, p-k)\right]a_p(l) = -\left[r_x(k,0) + r_x(p, p-k)\right]$, $k = 1, 2, \ldots, p$, $r_x(k,l) = \sum_{n=p}^{N} x(n-l)\,x^*(n-k)$,

$\epsilon_p^M = r_x(0,0) + r_x(p,p) + \sum_{k=1}^{p} a_p(k)\left[r_x(0,k) + r_x(p, p-k)\right]$ = modified covariance error

Example 1

Given data $x(n) = \beta^n u(n)$, $n = 0, 1, \ldots, N$. For a second-order filter, $p = 2$,

$r_x(k,l) = \sum_{n=2}^{N}\beta^{n-k}\beta^{n-l} = \beta^{-k-l}\sum_{n=2}^{N}\beta^{2n} = c\,\beta^{4-k-l}$, $c = \dfrac{1-\beta^{2(N-1)}}{1-\beta^2}$, (a)

$\begin{bmatrix} r_x(1,1) + r_x(1,1) & r_x(1,2) + r_x(0,1) \\ r_x(2,1) + r_x(1,0) & r_x(2,2) + r_x(0,0) \end{bmatrix}\begin{bmatrix} a(1) \\ a(2) \end{bmatrix} = -\begin{bmatrix} r_x(1,0) + r_x(2,1) \\ r_x(2,0) + r_x(2,0) \end{bmatrix}$;

inserting the values of $r_x(k,l)$ from (a) and solving for $a(1)$ and $a(2)$ we obtain $a(1) = -(1+\beta^2)/\beta$ and $a(2) = 1$.

Hence, the all-pole model has $A(z) = 1 - [(1+\beta^2)/\beta]\,z^{-1} + z^{-2}$.

35.4.4 Stochastic Modeling

35.4.4.1 Forward Reflection Coefficients

$\Gamma_j^f = -\dfrac{E\{e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*\}}{E\{|e_{j-1}^b(n-1)|^2\}}$,

$E$ stands for expectation.

35.4.4.2 Backward Reflection Coefficients

$\Gamma_j^b = -\dfrac{E\{e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*\}}{E\{|e_{j-1}^f(n)|^2\}}$

35.4.4.3 Burg Reflection Coefficient

$\Gamma_j^B = -\dfrac{2E\{e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*\}}{E\{|e_{j-1}^f(n)|^2\} + E\{|e_{j-1}^b(n-1)|^2\}}$
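The second-order modified covariance example of 35.4.3.4.1 can be verified numerically. The sketch below is restricted to $p = 2$ and real data so that the $2\times 2$ normal equations can be solved with Cramer's rule; the function name and the choice $\beta = 0.5$, $N = 10$ are assumptions.

```python
def modified_covariance_order2(x):
    """Modified covariance all-pole fit with p = 2 for real data x(0..N)."""
    p = 2
    N = len(x) - 1

    def r(k, l):
        # r_x(k, l) = sum_{n=p}^{N} x(n - l) x(n - k)
        return sum(x[n - l] * x[n - k] for n in range(p, N + 1))

    # Matrix entries r_x(k, l) + r_x(p - l, p - k) for k, l = 1, 2
    m11 = r(1, 1) + r(1, 1)
    m12 = r(1, 2) + r(0, 1)
    m21 = r(2, 1) + r(1, 0)
    m22 = r(2, 2) + r(0, 0)
    # Right-hand side -[r_x(k, 0) + r_x(p, p - k)]
    c1 = -(r(1, 0) + r(2, 1))
    c2 = -(r(2, 0) + r(2, 0))
    det = m11 * m22 - m12 * m21
    a1 = (c1 * m22 - m12 * c2) / det
    a2 = (m11 * c2 - m21 * c1) / det
    return a1, a2


beta = 0.5
data = [beta ** n for n in range(11)]
a1, a2 = modified_covariance_order2(data)
```

With $a(2) = 1$ the roots of $A(z)$ are $\beta$ and $1/\beta$, so one pole lies outside the unit circle; this illustrates that the modified covariance method does not guarantee a stable model.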


References

Hayes, M. H., Statistical Digital Signal Processing and Modeling, John Wiley & Sons Inc., New York, NY, 1996.

Kay, S., Modern Spectrum Estimation: Theory and Applications, Prentice-Hall, Englewood Cliffs, NJ, 1988.

Marple, S. L., Digital Spectral Analysis with Applications, Prentice-Hall, Englewood Cliffs, NJ, 1987.